Introduction

In this digital age, new interfaces for musical expression provide much broader musical possibilities than have ever existed before. There is a constant quest to be in harmony with one’s instrument so that music can flow freely from the imagination and take form effortlessly. This sparks an interest in new ways to interact with instruments, because we may be able to achieve more fluid methods for creating music. There are many new digital musical interfaces, but most are based on traditional musical instruments or are at least designed as a tangible object. This project aims to eliminate the physical “instrument” altogether. The sensor system enables the use of one’s own body as a musical instrument through detection of movement, freeing the artist from traditional requirements of producing live music. The ability to create and manipulate sound through movement provides the potential for immediate intuitive control of musical pieces.

Dance and music are quite obviously intertwined. One seems empty without the other. Dance and music deserve a close relationship, with no strings attached. The goal of this project is to blur the line between the two, and open up an avenue for them to mingle more intimately. Instead of dancing to music, it is now possible to create music by dancing. This project is a tool, an interface between motion and music, a new musical instrument. It is designed to be highly configurable to allow the artist as free a form of expression as possible. It is also designed to be fun and comfortable to use. This is a powerful tool and a fun toy.

High Level Design

User Interface

The user attaches four accelerometers and a wireless transmitter to his or her body. The sensors detect movement, which the system uses as input to produce music as audio output. By wearing these sensors, the user gains physical control over musical parameters such as volume, pitch, timbre, and tempo through movement and dance. In addition to continuous control of musical aspects, the system can recognize gestures made by the user. Gestures can trigger musical events, like playing an audio sample, or non-musical events, like switching between modes of operation. Multimodal operation is a key design aspect that ensures maximum flexibility: the user can decide on the fly which aspects of the musical piece to control and which types of movement control them. During a performance, mobility and independence are essential, so the user’s only input to the system is through the accelerometers. All operations can be performed with no human-computer interface other than the wearable sensors.

Functions

An emphasis is placed on flexibility so that the sensor system may be used in multiple contexts. Therefore, the system can be configured for multiple modes of operation.

First, the system provides the ability to either create or manipulate music. The former mode of operation allows the user to act essentially as a solo instrument. The latter mode allows real-time modification of a musical piece played by either a computer or another live musician, much as a conductor uses movement and gestures to direct the performance of a piece.

Secondly, the system allows for either direct or indirect mapping of sensor data to musical parameters. The former mode of operation maps mostly unprocessed sensor data to musical parameters. The latter provides a layer of algorithmic interpretation between sensor input and musical output, allowing the user to control musical parameters through cumulative multi-sensor movements.

There are many possibilities for configurable modes of operation, but the following table details the designations described above and lists some examples.

| Category | Create | Manipulate |
|---|---|---|
| Direct mapping | The user’s body is the musical instrument, with body movements directly creating sound. | The user’s movements are directly mapped to parameters of a musical piece, played either by a live musician or computer. |
| Example (direct) | Raising an arm could sound a note whose pitch depends on the acceleration of the arm movement. | A collaborative scenario could consist of someone playing an instrument while a dancer directly manipulates the timbre of the instrument through sensor-controlled filters. |
| Indirect mapping | The user’s movements control the creation of music via a selectable processing algorithm. | The user’s movements as a whole influence the performance of a piece of music. |
| Example (indirect) | Overall arm movements could be pitch input and leg movements volume input to an algorithmic composition with dissonance input based on whether the arms or legs were doing similar movements. | Overall acceleration of all sensors could increase the tempo of a predetermined piece. |
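
The two mapping styles in the table can be illustrated with a minimal Python sketch. It is not the project's implementation: the function names, the note range, the 2 g full-scale value, and the tempo gain are all assumptions chosen for illustration, and the readings are assumed to be in units of g with gravity already removed.

```python
import math

def direct_pitch(accel_g, low_note=48, high_note=84, max_g=2.0):
    """Direct mapping: one axis reading (in g) selects a MIDI note number.

    The note range and the 2 g full scale are illustrative assumptions.
    """
    # Clamp the magnitude to the assumed range, then scale linearly.
    level = min(abs(accel_g), max_g) / max_g
    return low_note + round(level * (high_note - low_note))

def indirect_tempo(samples, base_bpm=90.0, gain=30.0):
    """Indirect mapping: overall activity of all sensors raises the tempo.

    `samples` holds one (x, y, z) tuple in g per sensor. The base tempo
    and gain are illustrative assumptions.
    """
    total = sum(math.sqrt(x * x + y * y + z * z) for x, y, z in samples)
    mean_magnitude = total / len(samples)
    return base_bpm + gain * mean_magnitude
```

In the direct case a single reading drives a single parameter, while in the indirect case all four sensors are reduced to one summary value before it touches the music.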

System Level

This project consists of an embedded system and a MIDI host, either a general-purpose computer or other MIDI equipment such as a synthesizer. The embedded system has two halves. The first microcontroller unit (MCU) runs the wearable accelerometers and a radio frequency (RF) transmitter; the second MCU runs the RF receiver and generates the MIDI output. The MIDI data is then used as input to a host program on a personal computer (PC) for further data interpretation and audio generation. Although using a PC as the MIDI host is the more flexible option, the embedded system can also act as a standalone MIDI controller for interaction with other industry-standard MIDI equipment. The following diagram describes the system from a high level.

The green blocks represent hardware that is worn by the user, referred to as the “dancer-side” hardware. The blue blocks represent the hardware that composes the base station. The base station has a MIDI port, which can connect either directly to a synthesizer or to a computer running a program such as Max/MSP. On its own, the base station is capable of basic motion analysis and of sending MIDI note-on and note-off commands to control a synthesizer. For maximum flexibility, however, a program such as Max/MSP is required. When using such a program, the base station sends the raw accelerometer data in the form of MIDI Control Change messages. The dancer-side hardware communicates with the base station via a 2.4 GHz wireless link.
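
To make the two kinds of MIDI output concrete, here is a small Python sketch of how such messages are laid out on the wire. The status bytes (0x80, 0x90, 0xB0) and the 7-bit data range come from the MIDI standard; the controller numbering in `axis_controller` and the 8-bit-to-7-bit scaling in `to_midi_value` are assumptions made for illustration, not the project's actual assignment.

```python
def _check(channel, *data):
    """Validate a MIDI channel (0-15) and 7-bit data bytes (0-127)."""
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0-15")
    if any(not 0 <= d <= 127 for d in data):
        raise ValueError("data bytes must be 0-127")

def note_on(channel, note, velocity):
    _check(channel, note, velocity)
    return bytes([0x90 | channel, note, velocity])

def note_off(channel, note, velocity=0):
    _check(channel, note, velocity)
    return bytes([0x80 | channel, note, velocity])

def control_change(channel, controller, value):
    _check(channel, controller, value)
    return bytes([0xB0 | channel, controller, value])

def axis_controller(sensor, axis, base=20):
    """Hypothetical layout: 12 consecutive controller numbers,
    one per (sensor, axis) pair, starting at an arbitrary base."""
    return base + 3 * sensor + axis

def to_midi_value(sample_byte):
    """Assumed scaling: drop the lowest bit of an 8-bit sample so it
    fits the 7-bit MIDI data range."""
    return sample_byte >> 1
```

For example, `control_change(0, axis_controller(3, 2), to_midi_value(200))` would carry the third axis of the fourth sensor on channel 1 under these assumptions. A host program such as Max/MSP could then pick the values apart by controller number.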

Hardware Design

Sensors

Accelerometers are the logical sensor choice for detecting motion. Kionix, a company specializing in accelerometers, provided us with samples of their KXP74-1050 tri-axis accelerometers. As dance involves the motion of the arms and legs, we decided upon a system using four accelerometers, one for each limb. Placing these accelerometers around a human body, however, requires a lot of cable. To minimize the cable lengths, we placed the rest of the dancer-side electronics in a central location, around the belt-line. The accelerometers communicate via a Serial Peripheral Interface (SPI) capable of operating at up to 5 MHz, although the length of the lines may limit the usable speed of the bus. The ATmega644V MCU is configured as the SPI bus master and the accelerometers are configured as slaves. The MCU periodically cycles through the accelerometers and records the instantaneous acceleration of each one. The measurements are taken at regular intervals to allow for analysis of the motion through time.
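
The round-robin polling described above can be sketched in a few lines of Python. This is only a model of the control flow, not firmware: `read_axis` is a hypothetical callback standing in for an SPI transaction with one accelerometer, and the sensor-major ordering of the result is an assumption.

```python
def poll_sensors(read_axis, n_sensors=4, n_axes=3):
    """Visit each sensor in turn and collect one reading per axis.

    `read_axis(sensor, axis)` is a hypothetical stand-in for selecting
    one slave on the SPI bus and reading a single axis from it.
    """
    frame = []
    for sensor in range(n_sensors):      # one bus slave at a time
        for axis in range(n_axes):       # x, y, z
            frame.append(read_axis(sensor, axis))
    return frame

# Stand-in reader that encodes its arguments, to make the order visible.
def fake_reader(sensor, axis):
    return 10 * sensor + axis

print(poll_sensors(fake_reader))
# [0, 1, 2, 10, 11, 12, 20, 21, 22, 30, 31, 32]
```

Calling `poll_sensors` once per sample period yields one frame of 12 readings, which is the unit the later analysis works on.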

Wireless

With the intent to create a dance-operated musical instrument, we realized that a wired system would be cumbersome and would detract from the overall experience. Connecting a wire to a dancer would also be dangerous, since the dancer could trip over it. For this system to work well, it must be wireless. Before deciding upon a wireless link, we did a rough analysis to estimate the data transmission rate the link would require. During the analysis, we kept in mind the necessity for a fast, responsive, and highly configurable system.

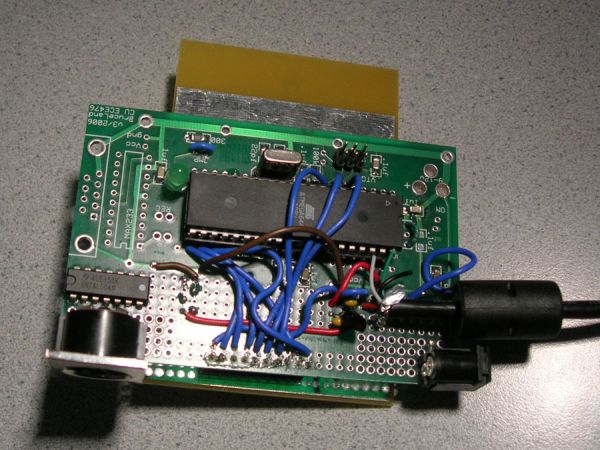

The data is intended to be used for motion capture or analysis, and as such, a fairly rapid sample rate is required. The human body, however, is not capable of moving at high frequencies, so a sample rate of around 60 Hz seems sufficient as a base minimum. To minimize noise and the amount of data to be transmitted, only the most significant bits from the accelerometers are used. As we are using approximately one byte of data from each axis of each accelerometer, 12 bytes (4 sensors × 3 axes) are required for each sample. At a sample rate of 60 Hz, this comes to a minimum required data rate of 12 × 8 × 60 = 5760 bps. The Radiotronix radios (RCT-433 and RCR-433), which are cheaply available and relatively easy to interface (thanks to Meghan Desai), fall short of this requirement, operating at around 2400 bps. In practice, a link even faster than 5760 bps is required, so that the microcontroller has sufficient time to gather samples from the accelerometers. The next cheapest radios happen to be the Atmel AT86RF230. They have several large benefits, including an impressive data rate of 250 kbps and a simple SPI interface. They require very few external components, a rare trait among 2.4 GHz radios. Operating at 2.4 GHz, they are within the global unlicensed band, requiring no special considerations to obey FCC regulations. However, the AT86RF230 radios pose several large challenges. No interface board is available for a reasonable price, which forced us to design our own board for the radios. Also, the package is a very small 32-lead QFN (quad flat no-lead) with a lead pitch of 0.5 mm, making hand-soldering tricky.
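
The rate estimate above is easy to check with a short Python sketch. The sensor count, axis count, byte count, sample rate, and the two radio speeds come from the text; the function names and the 12-bit width of a raw reading in `top_byte` are assumptions for illustration.

```python
def required_bps(sensors=4, axes=3, bytes_per_axis=1, sample_rate_hz=60):
    """Minimum payload data rate in bits per second."""
    bytes_per_sample = sensors * axes * bytes_per_axis
    return bytes_per_sample * 8 * sample_rate_hz

def top_byte(raw, width=12):
    """Keep the 8 most significant bits of a `width`-bit reading.

    The 12-bit width is an assumption about the raw sample size.
    """
    return (raw >> (width - 8)) & 0xFF

def link_is_fast_enough(link_bps, needed_bps):
    """True if the link rate strictly exceeds the payload rate."""
    return link_bps > needed_bps

needed = required_bps()
print(needed)                                 # 5760
print(link_is_fast_enough(2400, needed))      # False
print(link_is_fast_enough(250_000, needed))   # True
```

The figure counts payload bits only. Any framing or protocol overhead on the link, and the time the MCU spends reading the sensors, would push the real requirement higher, which is consistent with choosing a radio with a wide margin.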

Due to the importance of the quality of the wireless link for this project, a lot of design work was put into the hardware for the AT86RF230. The circuit built is taken directly from the application schematic included in Atmel’s datasheet for the AT86RF230, http://www.atmel.com/dyn/resources/prod_documents/doc5131.pdf.
