I’m an Electrical Engineering major, and each year my college’s branch of IEEE competes in a student hardware competition. Last year’s competition was inspired by the natural disasters in Haiti and Chile (the competition was held one week after the earthquake in Japan). This was a very large project that was tackled by a group of people. I was the programmer of the group but also helped with some of the sensors that the Arduino would be directly interfacing with. I will only cover the parts that I was involved with; however, I will briefly talk about the other parts so that readers can get a better understanding of the scale of the competition.

The playing course was meant to imitate a hotel that had been damaged during an earthquake. As seen below, there were 4 rooms with a central hallway. In each room there could be up to 4 “Victims”, as well as various pieces of “debris” and a “Hazard”. The victims were composed of a 3″ PVC cap with a magnetic coil and a status indicator LED. The debris was various sizes of 2X4 and 1X1 lumber painted white. The hazard was a large magnetic field at a different frequency than the victims. One of the biggest challenges was being able to navigate the course with the possibility of your path being blocked by debris. That’s where I came in!

This is my first Instructable (hopefully not the last). Unfortunately, I wasn’t really planning to write it at the time (I was too focused on the completion), so I don’t have any pictures of the robot under construction. I finally have some time to do a write-up, and the Microcontroller contest was just the incentive I needed to complete it. I’d really appreciate any comments and suggestions, and I’ll try to answer any questions you have. If you like my Instructable, please vote for me in the Microcontroller and/or Make It Move contest!

Step: 1 The Sensors

During our early meetings, we decided that the most efficient way to navigate was to follow the wall around the course. To do this we needed a way to keep track of not only the wall but also any obstacles that could be placed anywhere around the course. We build a lot of robots and other projects at my college, so we know quite a bit about sensors. What we needed was a way to accurately measure the distance to the walls.

We considered both infrared and ultrasonic sensors. There are infrared sensors that can read the distances we needed; however, we didn’t know many details about the paint that was to be used on the course walls or about the ambient lighting, and we were afraid that either could throw off our distance readings. We decided on ultrasonic sensors. There are dozens if not hundreds of ultrasonic sensors, but one of the most well known is the PING sensor from Parallax. We looked at several models but finally decided on the PINGs because they had the shortest minimum read distance, 2 cm. This way we could hug the walls pretty closely.

The first thing we needed to do was figure out how many sensors we needed. We determined that we would need 2 sensors to square up against the wall. We also needed 2 sensors on the front for obstacle avoidance and navigation. We would also need sensors on each side of the robot to locate the victims. One of the requirements when finding the victims was to announce each victim’s location on an invisible X and Y grid in each room. To do this we would need to be able to figure out our distance to each of the 4 walls. (Really we could do this with only 2 walls, but 4 walls provided some redundancy.) In the end we used 7 sensors: 2 on the front, 2 on the right side, 2 on the back and 1 on the left side. We only needed 1 on the left to provide distance to the walls; the pairs are used together to make sure the robot is square against the wall, either while turning or driving straight.
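To make the idea of squaring up with a sensor pair a little more concrete, here is a minimal sketch of the geometry. It is not the code from our robot: the function names, the sensor spacing, the tolerance and the grid cell size are all hypothetical values chosen for illustration.

```cpp
#include <cmath>

// Skew angle (radians) between the robot's side and the wall, from two
// sensors mounted on the same side. frontCm and rearCm are the two distance
// readings; spacingCm is the (assumed) distance between the two sensors.
// A result of 0 means the robot is parallel to the wall; a positive result
// means the front sensor is farther from the wall than the rear one.
double skewAngleRadians(double frontCm, double rearCm, double spacingCm) {
    return std::atan2(frontCm - rearCm, spacingCm);
}

// Treat the robot as square when both readings agree within a tolerance.
// The tolerance is an assumption, not a value from the competition robot.
bool isSquare(double frontCm, double rearCm, double toleranceCm) {
    return std::fabs(frontCm - rearCm) <= toleranceCm;
}

// Map a distance from one wall onto a grid index along that axis.
// cellSizeCm is hypothetical; the real grid size came from the rules.
int gridIndex(double distanceToWallCm, double cellSizeCm) {
    return static_cast<int>(distanceToWallCm / cellSizeCm);
}
```

With a hypothetical 15 cm sensor spacing, readings of 10.0 cm and 10.3 cm would count as square at a 0.5 cm tolerance, while 10 cm and 12 cm would not. Calling `gridIndex` once with the distance to a side wall and once with the distance to the back wall gives an X and a Y index for a victim.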

Step: 2 What is a PING sensor

Basically, a PING sensor uses high-frequency sound to accurately measure distance. It sends out a pulse when it receives a command, and it sends back a high signal to the microcontroller whose length matches the time the echo of that pulse took to return. Since the speed of sound is basically constant (it varies very slightly with temperature, humidity and elevation), the distance to an object can be calculated from the time it takes for the pulse to return. It takes about 29 microseconds for sound to travel 1 cm, and about 74 microseconds to travel 1 inch. But that is only one way; for the pulse to travel to an object and back to the sensor takes twice as long. Luckily for us, the Arduino language has a built-in function that measures this for you. It’s called pulseIn. You can divide the result from pulseIn by 58 for cm or by 148 for inches, or just use the raw microseconds as a measure of distance. For general-purpose distance measurements I used the cm conversion; however, for some portions of the code that needed sub-cm accuracy I used the microsecond values.
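The arithmetic above can be sketched as a pair of small helper functions. This is an illustration rather than the code from our robot, and the names `echoToCentimeters` and `echoToInches` are made up for the example.

```cpp
#include <cstdint>

// Round-trip microseconds per unit of distance: 29 us/cm and 74 us/inch
// one way, doubled because the sound travels out and back.
const uint32_t US_PER_CM_ROUND_TRIP = 58;
const uint32_t US_PER_INCH_ROUND_TRIP = 148;

// Convert an echo time in microseconds (the kind of value pulseIn returns)
// to whole centimeters. Integer division drops any fraction of a cm.
uint32_t echoToCentimeters(uint32_t echoMicros) {
    return echoMicros / US_PER_CM_ROUND_TRIP;
}

// Same conversion, in whole inches.
uint32_t echoToInches(uint32_t echoMicros) {
    return echoMicros / US_PER_INCH_ROUND_TRIP;
}
```

In an Arduino sketch the argument would come from something like `pulseIn(pin, HIGH)`. Because the integer division throws away the remainder, comparing the raw microsecond values keeps the finer resolution, which is why they are handy for sub-cm work.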

The gif below shows a dolphin sending out an ultrasonic pulse that bounces off a fish and returns to the dolphin. This is the same principle a PING sensor uses.

Step: 3 Finding the victims

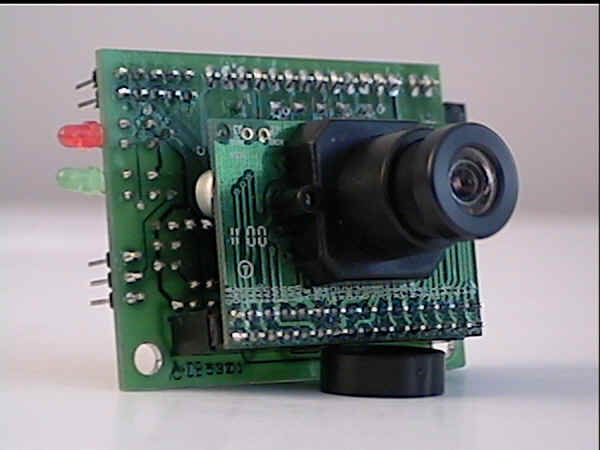

I mentioned earlier that I didn’t originally plan to make an Instructable about our robot, so unfortunately the pictures don’t show the camera module that we used. We ordered one early on but never received it. Luckily, the staff engineer who helped us a lot offered his own private camera to use during the competition, and we returned it afterwards. We used a CMU-Cam 1, which was developed by students at Carnegie Mellon University. We had a Parallax BasicStamp 2 controlling the CMU-Cam, which we trained to only see the green light reflecting off of the white PVC caps. During early testing with the CMU-Cam we noticed that white light would cause false readings even on black surfaces when close enough. We were worried that if glossy paint was used it would cause false readings and severely slow us down. The rules said the walls would be purple, so we decided to find a color that would be absorbed by the walls to prevent problems. We had an assortment of different color LED clusters from another project and realized that green was the best choice.

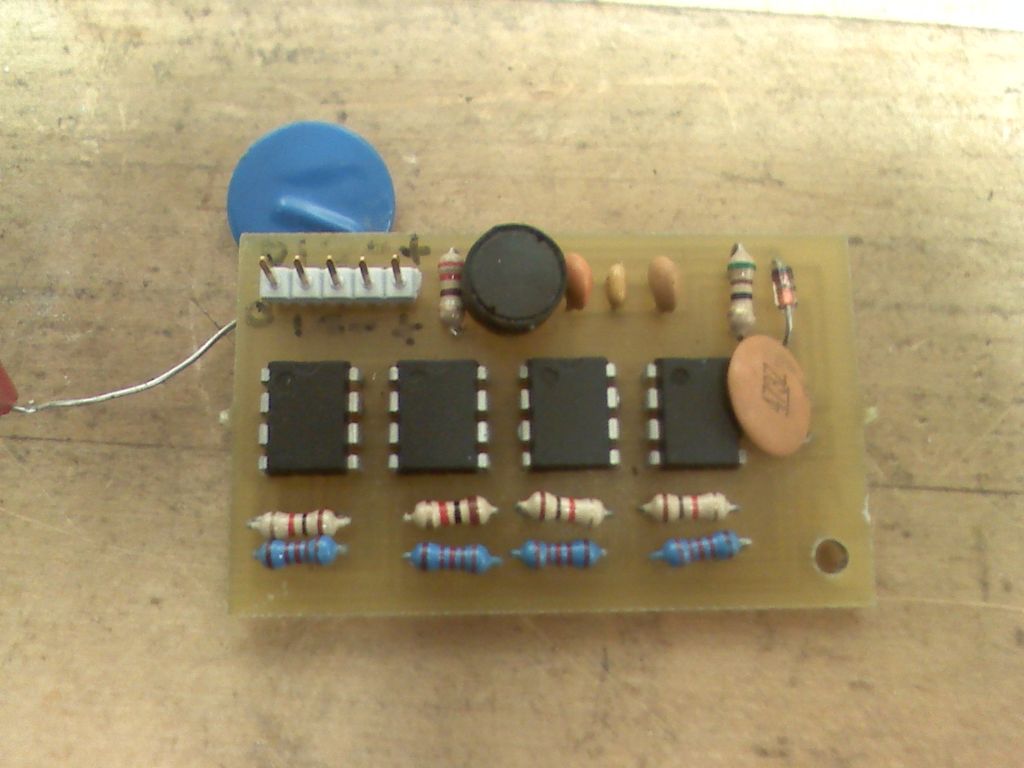

Unfortunately, the obstacles were also painted white (they didn’t want to make it too easy). That is where the EM-field sensors came in. Each victim produced an electromagnetic field, which would distinguish it from the obstacles. Using the knowledge we gained in our early electronics courses and EM fields class, we were able to build a fairly simple EM field detector that we tuned to detect only the victims, and a second one tuned to detect only the Hazard field. The sensors are based on the fact that if you expose a wire to a varying magnetic field, it will induce a current in that wire. This current also produces a voltage based on the resistance of the coil, which was very small. We hand-wrapped our own coils around some PVC pipe. We then amplified that voltage many times with op-amps. Next we passed the signal through a band-pass filter, which only allowed the frequency of the victims to pass. Finally, the signal was passed through a rectifier, which converted the AC signal into a DC signal. Both of these circuits output an analog voltage that we tied to the Mega’s analog port.
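Here is a rough sketch of how the camera result and the two detector voltages could be combined in software. This is an assumed decision rule for illustration, not the logic from our robot: the `Detection` names, the thresholds and the choice to check the Hazard first are all hypothetical. The readings are assumed to be 10-bit values (0 to 1023) such as the ones `analogRead` returns on the Mega.

```cpp
// Possible outcomes when the robot inspects a spot (hypothetical names).
enum class Detection { Nothing, Debris, Victim, Hazard };

// cameraSeesReflection: true when the camera reports the green reflection
//   from a white object (victim cap or white-painted debris).
// victimAdc / hazardAdc: rectified detector outputs as 10-bit ADC counts.
// victimThreshold / hazardThreshold: assumed trip points, found by testing.
Detection classify(bool cameraSeesReflection,
                   int victimAdc, int hazardAdc,
                   int victimThreshold, int hazardThreshold) {
    // Assumption: the hazard field takes priority over everything else.
    if (hazardAdc >= hazardThreshold) {
        return Detection::Hazard;
    }
    // A white object is a victim only if its field is also present.
    if (cameraSeesReflection) {
        return (victimAdc >= victimThreshold) ? Detection::Victim
                                              : Detection::Debris;
    }
    return Detection::Nothing;
}
```

For example, with both thresholds set to a made-up 300 counts, a white object with a victim reading of 520 would be classified as a victim, while the same object with a reading of 40 would be treated as debris.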

Once a victim was found, the CMU-Cam was programmed to switch to detecting the status of the LED on the victim. By taking a series of pictures fast enough, we could determine if the LED was always on, always off or blinking. This indicated the status of the victim (unconscious, dead, alive).
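The on/off/blinking decision can be sketched as a simple count over a series of samples. The real work was done on the BasicStamp and CMU-Cam, so this C++ version is only an illustration of the idea, and `LedPattern` and `classifyLed` are hypothetical names. It assumes each sample has already been reduced to "LED seen" or "LED not seen" and that the samples span at least one full blink period.

```cpp
#include <cstddef>

// The three patterns the status LED could show.
enum class LedPattern { AlwaysOff, AlwaysOn, Blinking };

// samples[i] is true when the LED was seen lit in picture i.
// An empty series is reported as AlwaysOff.
LedPattern classifyLed(const bool* samples, std::size_t count) {
    std::size_t onCount = 0;
    for (std::size_t i = 0; i < count; ++i) {
        if (samples[i]) {
            ++onCount;
        }
    }
    if (onCount == 0) {
        return LedPattern::AlwaysOff;
    }
    if (onCount == count) {
        return LedPattern::AlwaysOn;
    }
    // A mix of lit and unlit pictures means the LED changed state.
    return LedPattern::Blinking;
}
```

If the pictures are taken too slowly, or over too short a window, a blinking LED can look like it is always on or always off, which is why the sampling rate matters.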
