INTRODUCTION

Wouldn't it be cool to be this guy?

Powerful laser shining into the audience, playing strings by sweeping your hands across the beams, rocking out in a room full of fog and fawning girls?

We thought so. It turns out lasers are expensive, fog machines aren't allowed in lab, and fawning girls experience some sort of force field repelling them away from Phillips Hall and toward Waiters concerts. But playing strings by sweeping our hands around was still an option!

We resolved to build the poor man's laser harp, using LED emitters shining across a set of sensors that would notice when we blocked them with our hands. It needed to play tones that sounded sweet and lovely when we needed it for ballads, and hard-edged and techno when we were feeling the dirty nerdy groove. It needed to play several notes at once and listen to a remote control. Most of all, it needed to have a 3.5mm jack so that someday we could live the dream, playing it in that room next to that guy, fog machines and all.

HIGH-LEVEL DESIGN

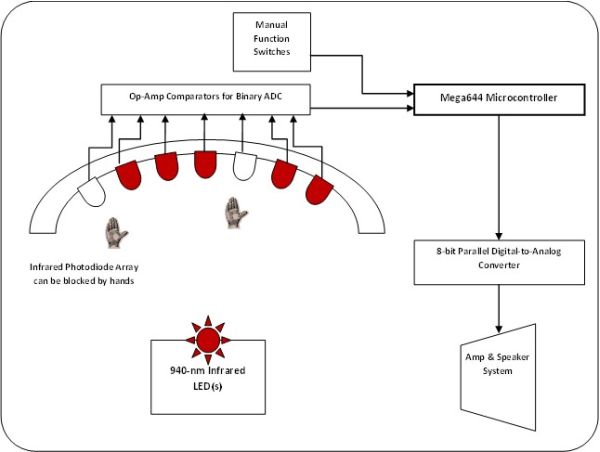

LEDzeppelin is designed to listen to two input sources, strings and remote control buttons, to control and send an 8-bit audio wave through a digital-to-analog converter to an amplified sound system.

The microcontroller polls comparators and switches every 50 milliseconds for user input, generating sound using Direct Digital Synthesis with steps every 128 microseconds; see the Hardware Design and Software Design sections for further detail and for tradeoffs between the two.
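The relationship between the two time scales can be sketched in a few lines of C. This is only an illustration: it assumes the 50 ms poll is counted off in 128-microsecond synthesis ticks from the same timer, which the text does not state, and the names `timer_tick` and `poll_due` are hypothetical.

```c
#include <stdint.h>

#define TICK_US    128UL  /* one DDS step, per the text */
#define POLL_MS    50UL   /* input polling period, per the text */
#define POLL_TICKS ((POLL_MS * 1000UL) / TICK_US)  /* 50000 / 128 = 390 ticks */

/* Counts down once per synthesis tick (assumed to be called from the timer ISR). */
static volatile uint16_t poll_countdown = POLL_TICKS;

void timer_tick(void)
{
    if (poll_countdown > 0) {
        poll_countdown--;
    }
}

/* Returns 1 roughly every 50 ms and restarts the countdown, otherwise 0. */
int poll_due(void)
{
    if (poll_countdown == 0) {
        poll_countdown = POLL_TICKS;
        return 1;
    }
    return 0;
}
```

With these numbers, roughly 390 sound samples are produced between one input poll and the next.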

SOFTWARE DESIGN

The central question in the LEDzeppelin software design was this:

Given a set of logical inputs representing string plucking, and another representing chord buttons, how could we generate an 8-bit parallel digital signal representing interesting music?

Direct Digital Synthesis

Direct Digital Synthesis (DDS) is a general method of producing a repeating waveform using a digital output. Our first experience with DDS was a previous lab in this course in which we constructed a Dual-Tone Multi-Frequency output signal to mimic a telephone dialer; this concept was extended in several ways in the software for LEDzeppelin.

The general idea of DDS is to recreate an arbitrary waveform by repeatedly running the output through a table containing one period of that waveform. The speed at which the output traverses the table controls the frequency of the output wave, and different frequencies can each run through the same table independent of each other.

We programmed our DDS to run through the table by adding an increment value to a 32-bit accumulator every time our primary timer overflowed. To give the microcontroller time to calculate a complicated wave output before the next interrupt service routine (ISR) was called, we prescaled the 16 MHz clock into the 8-bit timer by a factor of 8, so it overflowed every 128 microseconds (about 2000 clock cycles). Meanwhile, this ISR method allowed the signal to move through the table at a constant rate, leaving the increment variable a constant multiple of the tone frequency (see below). Table 1, below, contains the notes we chose to play, their frequencies, and the corresponding increments. Note that the top increments move through the full range in about 7-8 cycles, which is more than enough to get frequency and waveform; in other words, the 7.8 kHz switching frequency is high enough above the maximum output tone frequency of 1.3 kHz. (This also means it wasn't completely necessary to use a long variable, but it ported well from our previous code, and it provided the maximum possible table resolution.)
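As a rough sketch of the arithmetic described above, the increment for a tone is its frequency times 2^32 divided by the sample rate (16 MHz / 8 / 256 = 7812.5 Hz). The 256-entry table length and the function names below are assumptions made for illustration, not taken from the project code.

```c
#include <stdint.h>

/* Clock, prescaler, and 8-bit timer width come from the text;
   the table length (256 entries, indexed by the top 8 bits) is an assumption. */
#define F_CLOCK_HZ     16000000.0
#define PRESCALER      8.0
#define TIMER_COUNTS   256.0
#define SAMPLE_RATE_HZ (F_CLOCK_HZ / PRESCALER / TIMER_COUNTS)  /* 7812.5 Hz */

/* Increment that makes a 32-bit accumulator wrap once per tone period. */
uint32_t dds_increment(double tone_hz)
{
    return (uint32_t)(tone_hz * 4294967296.0 / SAMPLE_RATE_HZ + 0.5);
}

/* One DDS step: advance the phase, then use the top 8 bits as the table index. */
uint8_t dds_step(uint32_t *accumulator, uint32_t increment)
{
    *accumulator += increment;  /* wraps naturally at 2^32 */
    return (uint8_t)(*accumulator >> 24);
}
```

Because the accumulator wraps on overflow, the table index cycles without any explicit bounds check, and the increment stays proportional to the tone frequency.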

Making it Interesting

The above system would allow us to play one note at a time, on one waveform, with one amplitude. Not good enough for an audience, and not good enough for us.

A real harp wouldn't be very interesting if it silenced previous strings when a new string was played. We needed to make our instrument polyphonic, so it could play several tones independently. This meant cutting up the output space and devoting one accumulator to each chunk, adding them together for the final signal.

Since we were willing to sacrifice only one port to output, we were limited to an 8-bit signal value; this meant that each additional tone cut into the resolution available to the others. Also, we planned to use a signal envelope for each tone, which meant waveforms couldn't suffer from poor resolution even when their peak-to-peak amplitude was low. We chose to make four tones available at any given time, allowing each a respectable 64 possible values.
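The output-space split can be illustrated with a small mixing routine. This is a sketch under the stated numbers (four voices, 64 levels each); the function name and the clamping step are assumptions added for safety, not the project's code.

```c
#include <stdint.h>

#define NUM_VOICES 4   /* four simultaneous tones, per the text */
#define VOICE_MAX  63  /* each voice gets 64 levels (0..63) */

/* Sum four 6-bit voice samples into one 8-bit output value.
   The worst case is 4 * 63 = 252, so the sum always fits in a byte. */
uint8_t mix_voices(const uint8_t samples[NUM_VOICES])
{
    uint8_t out = 0;
    for (int v = 0; v < NUM_VOICES; v++) {
        /* Clamp defensively in case a sample exceeds its 6-bit share. */
        uint8_t s = samples[v] > VOICE_MAX ? VOICE_MAX : samples[v];
        out = (uint8_t)(out + s);
    }
    return out;
}
```

Going to eight voices under the same scheme would leave each voice only 32 levels, which is the resolution tradeoff described above.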

Unfortunately, there are five strings and only four accumulator/increment sets. If there were only four strings, then each string could have a dedicated accumulator and increment, and that would be that; adding a fifth string means that when five strings are played in a row, the microcontroller must reallocate part of the output space to the newest string. Since we were already timing each tone's age for the signal envelope, we could take the increment from the oldest tone and give it to the newest one, effectively decoupling accumulator[0] from a particular string and instead assigning it where it was needed at a particular time. This required some sleight-of-code to find the oldest string and to check whether a particular string is already playing, which prevents assigning multiple increments to a single string.
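One way the reallocation could look is sketched below: reuse the voice already owned by the plucked string if there is one, otherwise take over the oldest voice. The `Voice` struct, the `NO_STRING` marker, and the function names are hypothetical and only stand in for whatever bookkeeping the real code used.

```c
#include <stdint.h>

#define NUM_VOICES 4
#define NO_STRING  0xFF  /* hypothetical marker for a voice no string owns yet */

typedef struct {
    uint8_t  string_id;  /* string that owns this voice, or NO_STRING */
    uint16_t age;        /* ticks since the voice was last plucked */
    uint32_t increment;  /* DDS phase increment for this voice */
} Voice;

/* Mark every voice idle and maximally old so idle voices are claimed first. */
void init_voices(Voice voices[NUM_VOICES])
{
    for (int v = 0; v < NUM_VOICES; v++) {
        voices[v].string_id = NO_STRING;
        voices[v].age = UINT16_MAX;
        voices[v].increment = 0;
    }
}

/* Assign a voice to a plucked string and return its index. */
int allocate_voice(Voice voices[NUM_VOICES], uint8_t string_id, uint32_t increment)
{
    int chosen = -1;

    /* First, check whether this string is already playing. */
    for (int v = 0; v < NUM_VOICES; v++) {
        if (voices[v].string_id == string_id) {
            chosen = v;
            break;
        }
    }

    /* Otherwise, steal the oldest voice. */
    if (chosen < 0) {
        chosen = 0;
        for (int v = 1; v < NUM_VOICES; v++) {
            if (voices[v].age > voices[chosen].age) {
                chosen = v;
            }
        }
    }

    voices[chosen].string_id = string_id;
    voices[chosen].age = 0;  /* restart the envelope timer */
    voices[chosen].increment = increment;
    return chosen;
}
```

Checking for an existing owner first is what keeps a rapidly re-plucked string from occupying more than one voice.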

It might have been easier to just throw another DAC onto the system or decrease the resolution of the single-DAC output space, but our code was designed to be modular and scalable, so we could add more hardware strings with only one change in code (the defined ALLSTRINGS constant). If we had chosen one of the other routes, we would have lost either sound quality or ports on the microcontroller that could be used for more strings. (We could extend our system to a maximum of 13 strings if we had the money and time; programming checks showed that the processor easily handles the amount of polling it's currently doing and could do more.)

Besides multiple strings, we utilized a benefit of using DDS for sound generation: different waveforms can be introduced with very little code. We made different instruments for the harp using four common waveforms and a fifth, experimental form (see below).

The user can use a button on the remote to select between sine, square, triangle, sawtooth, and Gaussian waveforms: sine waves for that sweet, angelic sound; triangle waves with a bit more edge; sawtooth for that electro-lumberjack feel; square waves for their harsh, digital beauty; and the Gaussian, which is software-tunable for pluckiness. (Try it, we're serious.) Each wavetable is calculated during the software initialization phase, so the microcontroller never has to do any floating-point operations during interrupt service routines.

During testing, we noticed that our ability to rock out was being restrained by the envelope we had built: a linear ramp from maximum amplitude to 0 that took 2.56 seconds, multiplied and normalized inside the interrupt service routine. The chords and notes were playing, but the difference in amplitude between 0.0 seconds after plucking and 0.5 seconds after plucking was nearly inaudible, which meant rapid-fire plucking of a single note sounded muddy. In response, we updated our envelope. Synthesizer terminology talks about Attack-Decay-Sustain-Release, but we kept an immediate attack for responsiveness, decayed very quickly for about 90ms, and released gently over another 1.5 seconds, which made the instrument much more playable. The envelope can be seen below:
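The revised envelope shape can be approximated in integer math as follows. The 90 ms decay and 1.5 s release come from the text; the linear segments, the level reached after the decay (`ENV_AFTER_DECAY`), and the millisecond time base are assumptions for this sketch.

```c
#include <stdint.h>

#define ENV_MAX         255   /* full amplitude right at the pluck (immediate attack) */
#define DECAY_MS        90    /* fast decay, per the text */
#define RELEASE_MS      1500  /* gentle release, per the text */
#define ENV_AFTER_DECAY 96    /* assumed level at the end of the decay */

/* Envelope amplitude (0..255) for a tone that was plucked age_ms ago. */
uint8_t envelope(uint16_t age_ms)
{
    if (age_ms < DECAY_MS) {
        /* Steep linear drop from ENV_MAX to ENV_AFTER_DECAY. */
        uint32_t drop = (uint32_t)(ENV_MAX - ENV_AFTER_DECAY) * age_ms / DECAY_MS;
        return (uint8_t)(ENV_MAX - drop);
    }
    if (age_ms < DECAY_MS + RELEASE_MS) {
        /* Slow linear fade from ENV_AFTER_DECAY to silence. */
        uint32_t t = (uint32_t)age_ms - DECAY_MS;
        uint32_t drop = (uint32_t)ENV_AFTER_DECAY * t / RELEASE_MS;
        return (uint8_t)(ENV_AFTER_DECAY - drop);
    }
    return 0;
}

/* Scale one voice sample by the envelope, normalizing back to the sample range. */
uint8_t apply_envelope(uint8_t sample, uint8_t env)
{
    return (uint8_t)(((uint16_t)sample * env) / ENV_MAX);
}
```

The steep first segment is what makes a repeated pluck audibly distinct from the tail of the previous one.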

Finally, we recognized at the outset that the point of a harp is to sound good while strumming; we decided that each remote control button would reset all the strings to a note in an arpeggio, rather than a scale, so we could strum all the notes at once and still sound musical. A currentNote array improved on our previous design by keeping the strings from shifting pitch until they have been re-plucked. Of course, if the musician likes the otherworldly, digital-alien feel of shifting patterns, then he or she can cover the sensor indefinitely, which the microcontroller interprets as re-attack, and click through the chords. (In our initial design, we debounced the strings to sustain, but we decided we liked this feature more.)
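The latching behavior of the currentNote array might be sketched like this. The chord tables, their semitone values, `NUM_CHORDS`, and the function names are hypothetical; only `currentNote` and `ALLSTRINGS` are names from the text.

```c
#include <stdint.h>

#define ALLSTRINGS 5  /* number of hardware strings, per the text */
#define NUM_CHORDS 2  /* hypothetical; the real count depends on the remote */

/* Hypothetical arpeggios: semitones above the root for each string. */
static const uint8_t chord_notes[NUM_CHORDS][ALLSTRINGS] = {
    {0, 4, 7, 12, 16},  /* major arpeggio */
    {0, 3, 7, 12, 15},  /* minor arpeggio */
};

static uint8_t selected_chord;           /* set by the remote control */
static uint8_t currentNote[ALLSTRINGS];  /* note each string is actually sounding */

/* A remote button only changes the selection; sounding notes are untouched. */
void select_chord(uint8_t chord)
{
    if (chord < NUM_CHORDS) {
        selected_chord = chord;
    }
}

/* Called on every poll; bit s of blocked_mask is set while string s is covered.
   A covered string is treated as (re-)attacked and picks up the selected chord. */
void poll_strings(uint8_t blocked_mask)
{
    for (uint8_t s = 0; s < ALLSTRINGS; s++) {
        if (blocked_mask & (1u << s)) {
            currentNote[s] = chord_notes[selected_chord][s];
        }
    }
}
```

In this sketch, a string held covered while the chord changes picks up the new note on the next poll, which matches the shifting-pattern effect described above.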

HARDWARE DESIGN, IMPLEMENTATION, AND DEBUG

The hardware used in making the LEDzeppelin harp can be safely divided into three subsections: User Sensing, Remote Control, and Digital to Analog Conversion.

USER SENSING

In order to simulate a harp using no strings, we needed to determine when the user's hands were placed anywhere along the range of available pitches, in a manner similar to an Autoharp. String plucking was detected by sensing the shadows cast when a hand interrupts a beam of infrared light. The infrared light chosen for the simulated strings has a wavelength of 940 nm, longer than the human eye can see.

The primary hardware components involved in sensing the user's hand movements were infrared Light Emitting Diodes (LEDs) emitting at 940 nm and infrared-sensitive silicon photodiodes.

Parts List:

| Part | Cost/part ($) | Number Required | Total Cost ($) |
|---|---|---|---|
| Mega644 Microcontroller | 8 | 1 | 8 |
| 12V Power Supply | 5 | 1 | 5 |
| STK500 Development Board | 15 | 1 | 15 |
| Whiteboard | 6 | 2 | 12 |
| Pushbuttons | 1.04 | 7 | 7.28 |
| Plastic Remote Case | 2.69 | 1 | 2.69 |
| Infrared LED | 1.99 | 2 | 3.98 |
| Infrared Photodiode | 0.6 | 10 | 6 |
| Digital-to-Analog Converter | 1.59 | 1 | 1.59 |
| Scrap Wood | 0 | 1 | 0 |
| Scrounged Lab Stand | 0 | 1 | 0 |
| Scrounged Double Op-Amps | 0 | 6 | 0 |
| Scrounged Potentiometers | 0 | 5 | 0 |
| Scrounged Sound System | 0 | 1 | 0 |
| Scrounged 3.5mm Audio Jack | 0 | 1 | 0 |
| Scrounged Wire (in miles) | 0 | 17 | 0 |
| Total: | | | 61.54 |
