Our project implements a touchpad input system which takes user input and converts it to a printed character. Currently, the device only recognizes the 26 letters of the alphabet, but our training system could be easily generalized to include any figure of completely arbitrary shape, including alphanumerics, punctuation, and other symbols. A stylus is used to draw the figure/character on the touchpad, and the result is shown on an LCD display. Pushbutton controls allow the user to format the text on the display.

We chose this project because touchscreens and touchpads are prevalent today in many new technologies, especially with the recent popularity of smartphones and tablet PCs. We wanted to explore the capabilities of such a system and were further intrigued by our research into different letter-recognition methods. Finally, we had previous course experience in signal processing, computer vision, and artificial intelligence, and we felt that this project was an excellent way to synthesize all of this knowledge.

After completing the core system, we extended our project by interfacing it with a project created by another group. Jun Ma and David Bjanes created a persistence-of-vision (POV) display; we use a wireless transmitter to send text, which is then shown on that display. The same pushbutton controls may be used to format the text on both the LCD and the POV display.

High Level Design

Rationale

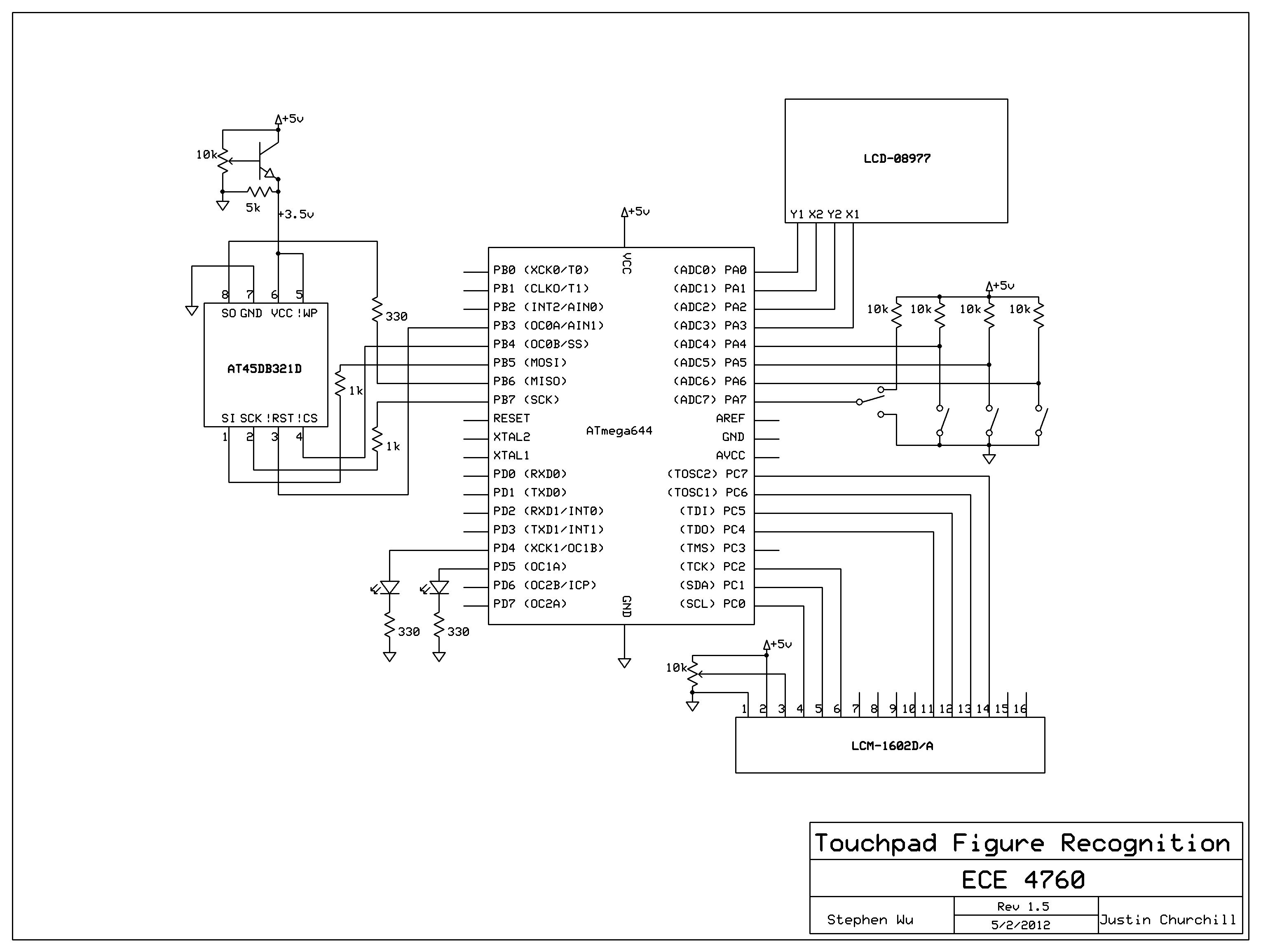

The rationale for the design is primarily to demonstrate the ATmega644’s processing ability – the classification process requires a large number of matrix multiplications as well as many accesses to Flash memory. Furthermore, in order to get an accurate result we had to run the ADCs at a rate of at least 1 kHz. This limited the number of samples that we had to compute the output.
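To put the sampling constraint in numbers, the arithmetic below estimates how many CPU cycles fit between two consecutive ADC samples. Only the 1 kHz rate comes from the text above; the 16 MHz clock and the function name are assumptions made purely for illustration.

```python
# Only ADC_RATE_HZ comes from the text; F_CPU_HZ is an assumed clock speed.
F_CPU_HZ = 16_000_000   # hypothetical CPU clock
ADC_RATE_HZ = 1_000     # minimum sampling rate stated above


def cycles_per_sample(f_cpu_hz: int, sample_rate_hz: int) -> int:
    """CPU cycles available between two consecutive ADC samples."""
    return f_cpu_hz // sample_rate_hz


budget = cycles_per_sample(F_CPU_HZ, ADC_RATE_HZ)
print(budget)  # 16000
```

Under these assumptions, any per-sample work (reading the ADC, storing the point) has to fit in roughly that many cycles, and a faster sampling rate shrinks the budget proportionally.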

We also attempted to make the interface as user-friendly as possible. This was achieved by creating a placeholder for the touchpad so it would not slide around when in use, adding a cursor to the LCD, and giving the user the ability to delete misclassified characters via a pushbutton. We also used a Nintendo DS stylus so that the user does not need to use a fingernail to press on the touchpad, which can be difficult and possibly dangerous.

The success of our design could lead to further development along the lines of signature verification or other handwriting analysis tools.

Logical Structure

Our program is fairly linear and follows a rather logical structure. Steps of operation are as follows.

1. The user writes a character on the touchpad when the MCU indicates that it is ready.

2. The MCU captures the data and cleans it up (see Software section for more details).

3. The captured drawing is passed through the neural network. This requires many sequential accesses to Flash memory.

4. The neural network returns the classified character, which is printed on the LCD display.

5. Repeat from Step 1.

At any time during this process, the user can also interact with the three pushbuttons to change the contents of the LCD display.
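The control flow described above can be sketched as a simple loop. This is a minimal illustration in Python rather than the firmware itself: `run_session`, the `clean` and `classify` callbacks, and the button names `"delete"` and `"space"` are all hypothetical, and button presses are handled only after each character instead of at any time.

```python
from typing import Callable, List


def run_session(
    strokes: List[list],
    clean: Callable[[list], list],
    classify: Callable[[list], str],
    button_events: List[List[str]],
) -> str:
    """Process drawings one at a time and return the final display text.

    button_events[i] lists the (hypothetical) button presses that occur
    after drawing i has been classified.
    """
    display: List[str] = []
    for i, raw in enumerate(strokes):
        drawing = clean(raw)         # capture the data and clean it up
        letter = classify(drawing)   # pass the drawing through the classifier
        display.append(letter)       # print the result on the display
        presses = button_events[i] if i < len(button_events) else []
        for press in presses:
            if press == "delete" and display:
                display.pop()        # remove a misclassified character
            elif press == "space":
                display.append(" ")  # assumed formatting action
    return "".join(display)
```

Passing the cleaning and classification steps in as callbacks keeps the loop independent of whichever classifier is eventually chosen.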

Background Math

The crux of the software surrounding this project was the A-Z letter classifier. Many possible methods were discussed and tried in MATLAB before settling on the final design. The classifier needed to be:

- Fast. It is unacceptable if the user has to wait any more than a few seconds after inputting each letter.

- Reliable. It must be relatively sure of its classification decision when it makes one – we do not want small fluctuations in the input to cause incorrect classification.

- Robust. There should be no reasonable worst-case inputs which cause the classifier to perform poorly.

Cross-Correlation

We first discussed the idea of cross-correlating the input with an alphabet of prototypical examples. The winner would be the one most highly correlated. This had speed issues, since cross-correlation of an input with an example is costly, O(n^4), and would have to be done 26 times to get an answer. Additionally, we had seen little literature on this method. A desire to implement something more interesting drove us away from this solution.
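To see where the O(n^4) cost comes from, here is a minimal sketch of brute-force 2-D cross-correlation between two n-by-n binary images, followed by picking the best-matching prototype. The function names and the use of the unnormalized peak value as the score are assumptions for illustration; this approach was discussed but not adopted.

```python
from typing import Dict, List

Image = List[List[int]]  # n-by-n grid of 0/1 pixels


def peak_cross_correlation(a: Image, b: Image) -> int:
    """Largest overlap count between a and b over every 2-D shift."""
    n = len(a)
    best = 0
    for dy in range(-(n - 1), n):        # O(n) vertical shifts
        for dx in range(-(n - 1), n):    # O(n) horizontal shifts
            total = 0
            for y in range(n):           # O(n^2) pixel products per shift
                for x in range(n):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < n and 0 <= xx < n:
                        total += a[y][x] * b[yy][xx]
            best = max(best, total)
    return best


def classify_by_correlation(drawing: Image, prototypes: Dict[str, Image]) -> str:
    """Return the label of the prototype with the highest correlation peak."""
    return max(
        prototypes,
        key=lambda label: peak_cross_correlation(drawing, prototypes[label]),
    )
```

The four nested loops make the cost per prototype explicit, and the whole computation is repeated once for every letter in the alphabet.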

SVM

We put significant effort into evaluating the second algorithm we considered, a support vector machine (SVM). This method splits the cleaned and normalized drawings (normalized this time to 100-by-80) into 80 boxes arranged 10-by-8. Each box has a value which is equal to the average number of pixels filled in that box. Each value represents the location of the drawing along the dimension for that box in an 80-dimensional space (1 dimension per box).
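The box-averaging step can be sketched as follows. This is an illustrative Python version, not the original MATLAB or firmware code; it assumes the drawing is a binary image with 100 rows and 80 columns, and it interprets each box value as the fraction of filled pixels in that box. The name `box_features` is hypothetical.

```python
from typing import List


def box_features(
    image: List[List[int]], box_rows: int = 10, box_cols: int = 8
) -> List[float]:
    """Split a binary image into box_rows x box_cols boxes and return the
    fraction of filled pixels in each box, in row-major order."""
    height, width = len(image), len(image[0])
    bh, bw = height // box_rows, width // box_cols  # pixels per box side
    features = []
    for r in range(box_rows):
        for c in range(box_cols):
            filled = sum(
                image[y][x]
                for y in range(r * bh, (r + 1) * bh)
                for x in range(c * bw, (c + 1) * bw)
            )
            features.append(filled / (bh * bw))
    return features
```

For a 100-by-80 image this yields 80 values, each covering a 10-by-10 pixel box, so one drawing becomes one point in the 80-dimensional space.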

More values can be created which are functions of the other values to raise the dimensionality of the problem. This shapes the location of the points in space to cause the classifier to perform better. We experimented with this, but could not succeed in finding improvements by using it.
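As one example of what such derived values might look like, the sketch below appends the square of every box value to the feature vector, doubling its dimensionality. The choice of squares and the name `expand_features` are purely hypothetical; the text does not say which functions were tried.

```python
from typing import List


def expand_features(features: List[float]) -> List[float]:
    """Append the square of each value, doubling the dimensionality."""
    return list(features) + [v * v for v in features]
```

An 80-dimensional vector would become 160-dimensional under this particular expansion.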

Using these steps, a prototypical alphabet of 26 vectors, each 80-dimensional, was created by applying the method above to all training sets and averaging the results for each letter. Classification of a new input then amounts to turning the drawing into a point in the 80-dimensional space and finding the nearest point representing a letter.
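The averaging and nearest-point lookup described above can be sketched as follows. This is a minimal illustration that assumes Euclidean distance (the text only says "nearest"); the function names and the dictionary layout of the training data are hypothetical.

```python
import math
from typing import Dict, List


def build_prototypes(
    training: Dict[str, List[List[float]]]
) -> Dict[str, List[float]]:
    """Average the feature vectors of each letter into one prototype."""
    prototypes = {}
    for letter, vectors in training.items():
        count = len(vectors)
        prototypes[letter] = [sum(column) / count for column in zip(*vectors)]
    return prototypes


def classify(features: List[float], prototypes: Dict[str, List[float]]) -> str:
    """Return the letter whose prototype is closest to the input point."""
    return min(
        prototypes,
        key=lambda letter: math.dist(features, prototypes[letter]),
    )
```

With 26 prototypes, classifying one drawing costs 26 distance computations, which matches the speed claim made for this method.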

This method is extremely fast, requiring only 26 calculations of 80-dimensional distance. It was even relatively accurate – approximately 91% of all letters from the test set were correctly classified under a set of cross-validation tests. However, we were very uncomfortable with the reliability of the output. Closely examining the results, we found that many letters which were correctly classified were only just barely determined to be the winners. A graphical depiction of the SVM’s performance is shown below.
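One way to quantify how narrowly a winner is chosen is to compare the distance to the best prototype with the distance to the runner-up. The sketch below is an assumption about how such a check could be written, not the measure we actually used; `classify_with_margin` and its relative-margin definition are hypothetical, and it requires at least two prototypes.

```python
import math
from typing import Dict, List, Tuple


def classify_with_margin(
    features: List[float], prototypes: Dict[str, List[float]]
) -> Tuple[str, float]:
    """Return (best letter, margin), where margin is the gap between the two
    smallest distances divided by the runner-up distance (0 = tie)."""
    ranked = sorted(
        (math.dist(features, proto), letter)
        for letter, proto in prototypes.items()
    )
    (d_best, best), (d_second, _) = ranked[0], ranked[1]
    margin = (d_second - d_best) / d_second if d_second > 0 else 0.0
    return best, margin
```

A margin close to 0 means the classification was correct only by a small amount, so slight variations in the drawing could flip the result.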

Parts List:

| Item | Source | Unit Price | Quantity | Total Price |
|---|---|---|---|---|
| ATmega644 (8-bit MCU) | ECE 4760 Lab | $6.00 | 1 | $6.00 |
| ATmega644 custom PC board | ECE 4760 Lab | $4.00 | 1 | $4.00 |
| 9V DC power supply | ECE 4760 Lab | $5.00 | 1 | $5.00 |
| Lumex LCM-1602-D/A (16×2 LCD) | ECE 4760 Lab | $8.00 | 1 | $8.00 |
| AT45DB321D (serial Flash) | Digikey | $4.30 | 1 | $4.30 |
| Nintendo DS Touchscreen | Sparkfun | $9.95 | 1 | $9.95 |
| Touchscreen breakout board | Sparkfun | $3.95 | 1 | $3.95 |
| 6″ solder board | ECE 4760 Lab | $2.50 | 1 | $2.50 |
| 2″ solder board | ECE 4760 Lab | $2.00 | 2 | $4.00 |
| Pushbutton | ECE 4760 Lab | free | 3 | $0.00 |
| Nintendo DS stylus | previously owned | free | 1 | $0.00 |
| Header pin | ECE 4760 Lab | $0.05 | 58 | $2.90 |
| Resistor | ECE 4760 Lab | free | 8 | $0.00 |
| NPN transistor | ECE 4760 Lab | free | 1 | $0.00 |
| Potentiometer | ECE 4760 Lab | free | 2 | $0.00 |
| LED | ECE 4760 Lab | free | 2 | $0.00 |
| Metal screw | ECE 4760 Lab | free | 4 | $0.00 |
| PVC tubing | previously owned | free | 1 | $0.00 |
| Plastic casing | previously owned | free | 2 | $0.00 |
| Wire | ECE 4760 Lab | free | a lot | $0.00 |
| Plywood board | previously owned | free | 1 | $0.00 |
| Total | | | | $48.60 |
