Introduction

In this project, ultrasound at around 24 kHz was used to detect movement near an object. Waving a hand or other solid object near the source of the ultrasound (a speaker) causes a shift in the frequency of the reflected sound, which is then detected by a microphone. We detected characteristic shifts in frequency to determine whether motion was towards (push) or away from (pull) the microphone. Two modes of visual indication were used to display the results of the motion detection: blinking LEDs and a computer display. With the blinking LEDs, a different color LED would light up according to the direction of motion detected. On the computer display, the waveform would be shown on screen, with the sections that represent motion marked as either a push or a pull.

We chose this project because we were inspired by Microsoft’s SoundWave, which detected shifts in frequency using the Fast Fourier Transform and used them to perform actions such as scrolling on a computer screen. We were interested in the effects of motion on the frequency produced by a speaker and detected by a microphone. We thought the project could be useful in situations where detection based on visible light fails. For example, in a completely dark room, changes in ambient light cannot be used to detect motion. This detection system is also useful in that it detects motion without first having to settle to a certain ambient level. An infrared-based detector, for example, first has to get used to a certain ambient level of infrared radiation; any change from that level then triggers detection. With ultrasound, we make detections purely on motion, so no ambient reference level is necessary.

We were able to successfully complete two different detection algorithms and are pleased with our achievements.

High Level Design

Logical Structure

Like the system designed by Gupta, Morris, Patel and Tan at Microsoft Research, our system relies on the Doppler frequency shift in a reflected sound wave for gesture recognition. The design works as follows. First, sound is produced by feeding a periodic square wave through a piezoelectric transducer at a frequency above the audible range of humans. When the user moves, the reflected sound waves are frequency-shifted because of the Doppler effect. These sound waves are then picked up by the microphone in our hardware set-up. The hardware compares the incoming signal from the microphone with the one issued through the piezoelectric transducer; frequency changes are calculated in the analog domain in hardware. The resulting signal is then sampled by the Analog-to-Digital Converter (ADC) of the microcontroller. Based on the sampled data, the microcontroller interprets the changes in voltage level to determine what action the user performed, and reports each action as either a push or a pull. Two settings are provided:

- A real-time action recognition mode where the microcontroller attempts to determine and report user actions

- A delayed action recognition mode where the user is given a period of approximately 5 s to perform actions; the microcontroller records all data and then proceeds to identify actions based on the data and reports back to the user

Rationale

Why did we choose to compare incoming and outgoing (from the point of view of the microcontroller) signals in hardware rather than calculating the Fast Fourier Transform (FFT) and finding amplitudes, as the researchers who inspired this project did? The FFT is a computationally intensive process that the microcontroller is unlikely to be able to carry out while sampling the incoming signal at a constant high frequency. Since we wanted to generate a sound that is inaudible to humans, it has to be high-pitched, at least above 20 kHz. To sample this high frequency, the Nyquist sampling theorem says that we would have to sample at 40 kHz or more for the original signal to be reconstructable. The microcontroller’s processor clock runs at 16 MHz, so sampling at 40 kHz would be possible, and the 16 MHz processor could probably perform the FFT on the samples. However, the microcontroller would not be able to both sample and compute the FFT efficiently. We therefore had to manipulate some part of the signal in hardware so that both sampling and computation could be done effectively on the microcontroller.
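The timing argument above can be made concrete with a little arithmetic. The sketch below only divides the clock rate by the sample rate, using the 16 MHz and 40 kHz figures from the text; the 1 kHz rate is the one the Background Math section arrives at for the mixed-down signal. The function name is illustrative.

```python
def cycles_per_sample(cpu_hz: int, sample_hz: int) -> int:
    """Whole CPU clock cycles available between two consecutive samples."""
    return cpu_hz // sample_hz


CPU_HZ = 16_000_000  # processor clock quoted in the text

# Sampling the raw ultrasound at the 40 kHz Nyquist minimum.
print(cycles_per_sample(CPU_HZ, 40_000))  # 400

# Sampling only the low-frequency difference signal at 1 kHz.
print(cycles_per_sample(CPU_HZ, 1_000))   # 16000
```

At 40 kHz, every ADC read, buffer write and any FFT work would have to fit in about 400 cycles per sample, whereas sampling the mixed-down signal leaves roughly forty times that budget.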

The research that inspired this project ran on a computer, which has much more processing power than the microcontroller, so the researchers were able to perform most of the calculations in software instead of hardware. Using hardware to manipulate data makes this system much more difficult to tune. However, manipulation in the analog domain, as we learnt in this project, is both fast and powerful, and presents an interesting alternative for future development.

Background Math

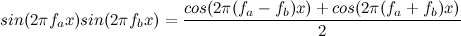

The crux of the hardware manipulation is in the multiplier unit. We really appreciate this suggestion from Professor Land! The usage of the multiplier is based on the following equation, which indicates that the product of two sine waves produces two phase-shifted sine waves, one with a frequency that is the difference in frequencies between that of the two input waves, and the other with a frequency that is the sum of the two input waves:
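Written with f_a and f_b for the two input frequencies (the symbols used in the next paragraph), the standard product-to-sum identity that matches this description is shown below. Unit amplitudes and zero initial phase are assumed for simplicity; a cosine is a sine shifted in phase by 90 degrees.

```latex
\sin(2\pi f_a t)\,\sin(2\pi f_b t)
  = \tfrac{1}{2}\Big[\cos\big(2\pi (f_a - f_b)\,t\big) - \cos\big(2\pi (f_a + f_b)\,t\big)\Big]
```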

We exploit this magical yet fundamental property of sine waves to find the difference in frequency between incoming and outgoing signals in hardware. Initially, we predicted that the frequency shift of the incoming sound wave relative to the outgoing sound wave would be on the order of 500 Hz, assuming that the user’s hand can move at a speed of 10 m/s. (This was later found to be an unreasonably high frequency shift to expect.) When we did our preliminary calculations, we set the outgoing signal to a frequency of 24 kHz. This meant that the incoming frequency, if the user were moving away, would be about 23500 Hz. If the incoming and outgoing signals were multiplied, the frequency difference (fa-fb) would be 500 Hz, which is what we want to sample, and the sum (fa+fb) would be 47500 Hz, which is easily filtered away using a low-pass filter. Since the two frequencies are so far apart, the low-pass filter would not be too difficult to tune.
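The multiply-then-filter idea can be checked numerically. The following is a rough simulation, not a model of the actual analog circuit: the 1 MHz simulation rate, the 21-sample moving average standing in for the low-pass filter, and the zero-crossing frequency estimate are all assumptions chosen for illustration. Only the 24 kHz and 23.5 kHz tones come from the text.

```python
import math


def mix_and_lowpass(f_out, f_in, sample_rate, duration, window):
    """Multiply two sine waves, then smooth the product with a moving average."""
    n = int(sample_rate * duration)
    product = [
        math.sin(2 * math.pi * f_out * k / sample_rate)
        * math.sin(2 * math.pi * f_in * k / sample_rate)
        for k in range(n)
    ]
    filtered = []
    acc = 0.0
    for k, x in enumerate(product):
        acc += x
        if k >= window:
            acc -= product[k - window]  # drop the sample leaving the window
        if k >= window - 1:
            filtered.append(acc / window)
    return filtered


def estimate_frequency(samples, sample_rate):
    """Estimate frequency from the number of sign changes (two per cycle)."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))
    duration = len(samples) / sample_rate
    return crossings / (2 * duration)


SIM_RATE = 1_000_000  # simulation rate in Hz (assumed, not a hardware value)
difference = mix_and_lowpass(24_000, 23_500, SIM_RATE, duration=0.02, window=21)
print(round(estimate_frequency(difference, SIM_RATE)))
```

The 21-sample window is close to one period of the 47.5 kHz sum term at this simulation rate, so the moving average nearly cancels it while leaving the slow difference term almost untouched. The printed estimate should come out close to 500 Hz.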

In addition, the low frequency signal of about 500 Hz (at most) would be easy to sample, because it would only have to be sampled at a low frequency of 1 kHz to be reconstructable.
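To put the 500 Hz estimate in context, the sketch below uses the textbook Doppler formula for an echo from a moving reflector with the source and receiver at rest. This is not necessarily the model behind the authors' original estimate, and the speed of sound (343 m/s, air at about 20 °C) and both function names are assumptions.

```python
SPEED_OF_SOUND = 343.0  # m/s, air at about 20 degrees C (assumed)


def reflected_doppler_shift(f0, v, c=SPEED_OF_SOUND):
    """Frequency shift (Hz) of an echo from a reflector approaching at v m/s.

    A negative v means the reflector is moving away, giving a negative shift.
    """
    return f0 * (c + v) / (c - v) - f0


def speed_for_shift(f0, shift, c=SPEED_OF_SOUND):
    """Reflector speed (m/s) that would produce the given echo shift (Hz)."""
    return shift * c / (2 * f0 + shift)


print(round(reflected_doppler_shift(24_000, 1.0), 1))   # hand approaching at 1 m/s
print(round(reflected_doppler_shift(24_000, -1.0), 1))  # hand receding at 1 m/s
print(round(speed_for_shift(24_000, 500.0), 2))         # speed needed for a 500 Hz shift
```

Under these assumptions, a hand moving at 1 m/s shifts a 24 kHz tone by roughly 140 Hz, which is consistent with the later observation that 500 Hz was too high a shift to expect from ordinary gestures.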

Hardware/Software Tradeoffs

In this project, at many points we had to make the decision of whether to process data in hardware, or in software. Some decisions were clear, such as whether to use FFT in software, or the multiplier in hardware – the microcontroller would not have been fast enough. Other decisions were not so clear.

For example, the input signal was often noisy. We could have used a low-pass filter in hardware, or a running-average filter in software, to remove the noise. In another situation, the output signal had a DC offset that changed according to the environment. If the hardware was placed in an open area, the DC offset would be lower, and if the hardware was placed under something that reflected most of the sound back to the microphone, the DC offset would be higher. We could have used a blocking capacitor in hardware, followed by another section in the circuit to pin the middle voltage of the input signal to 2.5 V before it is sampled by the ADC, or used a long-term averager in software to determine the average level.

In the first case, we chose to implement the low-pass filter in hardware because the noise would have obscured most of the signal that we wanted to capture. The running-average filter in software is inferior in this case mainly because, without floating-point arithmetic, the filter would be rather inaccurate, and if we had used floating-point arithmetic on that many samples, the microcontroller might not have been able to sample quickly enough. In hardware, the low-pass filter quickly and accurately removes high-frequency signals and presents the ADC with a cleaner signal that is more easily interpreted in software.
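For comparison, the software alternative that was not chosen might look something like the sketch below. It is an assumption-laden illustration rather than code from the project: it uses a power-of-two window so that the division becomes a right shift, which is the usual integer-only approach on a small microcontroller. The truncation in that shift is one source of the inaccuracy mentioned above.

```python
class RunningAverage:
    """Integer-only running average over the last 2**log2_window samples."""

    def __init__(self, log2_window=3):
        self.shift = log2_window
        self.buf = [0] * (1 << log2_window)  # circular buffer of recent samples
        self.index = 0
        self.total = 0

    def update(self, sample):
        # Replace the oldest sample in the running total with the new one.
        self.total += sample - self.buf[self.index]
        self.buf[self.index] = sample
        self.index = (self.index + 1) & (len(self.buf) - 1)
        return self.total >> self.shift  # truncating divide by the window size


avg = RunningAverage(log2_window=2)
print([avg.update(s) for s in [4, 4, 4, 4, 8, 8]])
```

Each update costs a handful of integer operations and the buffer grows with the window length, so both time per sample and memory scale with how aggressively the signal is smoothed.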

In the second case, we also used hardware to shift the middle of the signal. An average in software, if taken over a short period of time, would be affected drastically by the signals that we wanted to capture. An accurate long-term average would not only require a lot of memory, but would also take a long time to react if the system was suddenly moved and had to adapt to new conditions. By using hardware to shift the DC level of the middle of the signal, we could get fast shifting under changing conditions without paying in memory.

Standards

We used the UART standard in communications between the microcontroller and the computer, for display purposes.

Patents, Copyrights, Trademarks

We acknowledge the inspiration from the Microsoft SoundWave project for their idea in using the Doppler Effect to sense motion. The core of their gesture-detection system lay in the FFT, which allowed them to compare frequency shifts. We compared frequency shifts using a multiplier unit instead of using FFTs. No infringement of their copyright is intended.

Numerous patents exist for the use of light for motion sensing, but these are not closely related, since our project relies on sound rather than light for motion detection. Patents exist for ultrasonic motion detection systems, such as patent number 4604735, granted to Natan E. Parsons for an ultrasonic motion detection system that is applied to faucets. Ultrasonic Doppler-based sensing has also been applied to blood flow by Donald Baker. This was presented in a paper in IEEE Transactions on Sonics and Ultrasonics in July 1970.

A Google Scholar search on the terms “ultrasonic gesture doppler” returns several previous works on the use of ultrasound and the Doppler effect for gesture sensing. However, none of these works were referenced in the course of the project. The use of the multiplier to discriminate frequencies and detect motion, as well as the use of the saturating counter and a simple majority in the decision-making process for detecting pushes and pulls based on the amplitudes detected, is novel to the best of our knowledge at the time of writing.

No copyright infringement is intended in the execution of this project.

Hardware

Microcontroller

The microcontroller used was the Atmel ATmega1284P. It is mounted on a printed circuit board that was issued to us at the beginning of the semester. The printed circuit board gave us easy access to all 32 I/O pins of the microcontroller. We did not rebuild the board because of lack of time; using the issued board also made debugging much easier. The board also has a USB connector, which gives us easy access to terminal output through UART.

Microphone

The microphone used was an ordinary microphone designed for the audible range. It was purchased from DigiKey.com and has the model number CMA-4544PF-W. It was chosen because the price was within budget. We were initially not sure whether the microphone would be able to pick up sound at frequencies above the audible range, but we took the risk and tried it. We tested this by putting a sine wave through the piezoelectric transducer and checking the microphone's response at various frequencies above our hearing range. Of the frequencies above 20 kHz, 24 kHz seemed to give a good response, so we chose to use that frequency.

Blocking Capacitor

The blocking capacitor was used after the microphone to cut out the DC offset of the microphone bias, so that the higher frequency signal would pass through.

Amplifiers

Amplifier circuits were used in two places in our hardware to amplify the signal that we were trying to isolate.

Initially, the rails of the amplifiers we used were set at +5 V and -5 V. One thing that we learnt about this configuration was that our voices and other background noises, such as tapping on the table, were amplified so much that the signal reached the rails. Once the signal saturated at the rails, the frequency content of those sounds was lost. Since those frequencies were removed, they were not passed to the later hardware stages and hence were not sampled by the microcontroller. This is an interesting way of filtering out speech and other noise from our signal.

However, the tradeoff was that the eventual signal was very small, which made the signal-to-noise ratio very low. Since we wanted a large signal-to-noise ratio, we decided to raise the rails to +12 V and -12 V in our final implementation and chose to remove any residual noise using filters.

High-pass filter

The high pass filter before the multiplier was used to cut out the lower frequency signals, which must have been noise. We were expecting the incoming signals’ frequencies to vary between about 23 kHz and 25 kHz at most, and so none of the lower frequency signals would have been useful. Hence, cutting these signals out before the multiplier makes sense.

Multiplier

This is the core unit in our hardware manipulation. As described above in the section on Background Math, we used the multiplier to produce signals at the difference and the sum of the incoming and outgoing frequencies. The multiplier has the model number AD633 and was also purchased from DigiKey.com. It was found to be very reliable, and using it was almost plug-and-play.

Low Pass Filter

This low-pass filter after the multiplier forms part of the core of the signal manipulation path. Its purpose is to remove the high-frequency component at the sum of the incoming and outgoing frequencies, leaving only the low-frequency component at their difference. This difference in frequency is exactly the Doppler shift that we were looking for.
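How much separation such a filter gives can be estimated with the standard first-order RC low-pass response. The source does not state the filter order or component values, so the single-pole model, the 8 kΩ and 10 nF values, and the resulting cutoff near 2 kHz are all hypothetical. Only the 500 Hz and 47.5 kHz frequencies come from the Background Math section.

```python
import math


def rc_cutoff(resistance_ohm, capacitance_farad):
    """Cutoff frequency (Hz) of a first-order RC filter: 1 / (2*pi*R*C)."""
    return 1.0 / (2 * math.pi * resistance_ohm * capacitance_farad)


def lowpass_gain(f, f_cutoff):
    """Magnitude response of a first-order low-pass filter at frequency f."""
    return 1.0 / math.sqrt(1.0 + (f / f_cutoff) ** 2)


fc = rc_cutoff(8e3, 10e-9)  # hypothetical component values
print(round(fc))
print(round(lowpass_gain(500, fc), 3))     # difference component
print(round(lowpass_gain(47_500, fc), 3))  # sum component
```

With a cutoff anywhere between the two components, even a single pole passes the 500 Hz term nearly unchanged while reducing the 47.5 kHz term to a few percent of its amplitude, which fits the remark that the filter is not difficult to tune.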

This difference between frequencies tells us only the magnitude of the frequency shift, not its direction. However, from the waveforms sampled, we noticed characteristics in the amplitudes that correspond directly to either a push or a pull motion. These characteristics are exploited to distinguish a push from a pull, and are further explained in the Software section. Initially, we wanted to add a further pair of filters, one with a passband peaking at about 23500 Hz and the other with a passband peaking at about 24500 Hz. By comparing the amplitudes of the signals passed by these two filters, we would be able to tell which way the frequency shifted and therefore the direction of the motion. In the end, this was not implemented, since it seemed sufficient to make decisions based on the amplitudes. It could be implemented in future if we have more time to improve the project and the accuracy of detection.

Second Low-pass Filter

This low-pass filter was added because we noticed that, after the second amplifier, voice and surrounding noise were still being passed through as very high-frequency noise. These high frequencies were effectively cut out by the addition of this low-pass filter, resulting in a much cleaner signal.

Unit to prepare signal for ADC input

We noticed that the DC offset level of the signal at the point after the second low-pass filter was changing with the surrounding environment. Because the ADC required the signal to center around a fixed voltage for accurate detection, we had to find a way to hold the DC offset constant regardless of environment. This unit was added to pin the DC offset level of the signal at 2.5 V, which is the middle point for the microcontroller’s ADC to sample.
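The reason 2.5 V is the convenient centre can be seen from the ADC's code range. The sketch below assumes a 5 V ADC reference, which the text implies but does not state, and the 10-bit resolution of the ATmega1284P's ADC. The function and constant names are illustrative.

```python
ADC_BITS = 10  # ATmega1284P ADC resolution
V_REF = 5.0    # ADC reference voltage in volts (assumed)

MAX_CODE = (1 << ADC_BITS) - 1   # 1023
MID_CODE = 1 << (ADC_BITS - 1)   # 512, the code for V_REF / 2


def adc_code(voltage):
    """Ideal ADC reading for an input voltage, clamped to the valid range."""
    code = int(voltage * (1 << ADC_BITS) / V_REF)
    return max(0, min(MAX_CODE, code))


def signed_sample(code):
    """Convert a raw ADC code into a signed deviation from mid-scale."""
    return code - MID_CODE


print(adc_code(2.5), signed_sample(adc_code(2.5)))  # 512 0
print(signed_sample(adc_code(3.0)))                 # 102
print(signed_sample(adc_code(2.0)))                 # -103
```

With the signal pinned at mid-scale, swings in either direction have equal headroom before clipping, and software can treat the reading minus 512 as a signed amplitude without tracking the offset itself.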

Capacitor coupling to ground

At this point in the signal path after the signal was prepared for ADC input, we noticed spikes appearing in the signal. These high frequency spikes seemed to be coming from the microcontroller, because when we measured the signal when disconnected from the microcontroller, the spikes disappeared. To remove these spikes for a cleaner signal, we added a capacitor coupling to ground.

Parts List:

| Item | Quantity | Total Price |
|---|---|---|
| Breadboard | 1 | $6.00 |
| Solder board | 1 | $2.50 |
| Power supply | 1 | $5.00 |
| Speaker CDM-20008 (did not use this eventually) | 1 | $2.18 |
| Microphone CMA-4544PF-W | 1 | $0.96 |
| Multiplier AD633 | 1 | $9.89 |
| DIP socket | 6 | $3.00 |
| SIP socket | 8 | $0.40 |
| DIP socket for 1284p | 1 | $0.50 |
| ATmega1284p | 1 | $5.00 |
| USB connector for custom PC board | 1 | $1.00 |
| SIP plug | 36 | $1.80 |
| Custom PC board | 1 | $4.00 |
| Total | | $42.20 |
