Introduction

BlindAid is a portable device that reads Braille aloud and warns the user of nearby objects. It is intended for people who have recently lost their sight and have not yet mastered reading Braille or using a cane. It can also serve as a learning aid that helps the user decipher Braille without constantly consulting a Braille dictionary.

High Level Design

Rationale

After browsing the website of previous years' projects, we decided it would be worthwhile to apply what we learned in class to help people in need, rather than build a toy that only satisfies our own interest in technology.

Since most Braille found in public places has a standard size, we decided to use six push buttons arranged in a 2×3 matrix instead of image sensing. When the buttons are pressed against a Braille cell, the buttons that line up with the raised dots are pushed in.
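
The mapping from six button states to a letter can be sketched as a small table lookup. This is only an illustration, not the project's actual code: the function name `braille_to_char` and the assignment of buttons to bits are assumptions, and only the 26 standard Braille letters are covered.

```c
#include <stdint.h>

/* Assumed encoding: bit (n-1) is set when the button under dot n is
   pushed. Dots 1-3 run down the left column of the cell and dots 4-6
   down the right column (standard Braille dot numbering). */
static const uint8_t braille_letters[26] = {
    0x01, 0x03, 0x09, 0x19, 0x11, 0x0B, 0x1B, 0x13, 0x0A, 0x1A, /* a-j */
    0x05, 0x07, 0x0D, 0x1D, 0x15, 0x0F, 0x1F, 0x17, 0x0E, 0x1E, /* k-t */
    0x25, 0x27, 0x3A, 0x2D, 0x3D, 0x35                          /* u-z */
};

/* Returns the letter for a 6-bit button pattern, or '?' when the
   pattern is not one of the 26 letters. */
char braille_to_char(uint8_t buttons)
{
    buttons &= 0x3F; /* only the low six bits carry button states */
    for (int i = 0; i < 26; i++) {
        if (braille_letters[i] == buttons) {
            return (char)('a' + i);
        }
    }
    return '?';
}
```

With this encoding, a cell with only dot 1 raised gives pattern `0x01` and decodes to `a`. A table scan is enough here because there are only 26 patterns to compare.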

The push-button design not only makes our project simpler and more elegant, it also makes it more affordable for blind users.

Since our product is aimed at blind people who have not yet mastered reading Braille, we assumed they are also new to using a cane. We therefore attached an IR sensor that detects whether an object is close to the user, in the hope of reducing the chance of collisions.
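
One way to turn an analog IR reading into a "too close" warning is a threshold with a little hysteresis, so the warning does not flicker when the reading hovers near the limit. The sketch below is an assumption on our part, not the project's implementation: the function name, the threshold values, and the premise that a larger ADC reading means a closer object are all hypothetical and would need to be calibrated against the actual sensor.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical ADC thresholds; real values depend on calibration. */
#define NEAR_ON  400u /* start warning at or above this reading */
#define NEAR_OFF 350u /* stop warning once the reading drops below this */

/* Returns the new warning state given the previous state and the
   latest ADC reading. Assumes a larger reading means a closer object. */
bool proximity_update(bool warning_active, uint16_t adc_reading)
{
    if (warning_active) {
        return adc_reading >= NEAR_OFF; /* stay on until clearly far */
    }
    return adc_reading >= NEAR_ON;      /* turn on only when clearly near */
}
```

The caller would keep the returned state between samples and ask the speech chip to play the warning when the state changes from off to on.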

The horizontal and vertical spacing between dot centers within a Braille cell is approximately 0.1 inches (2.5 mm); the blank space between dots on adjacent cells is approximately 0.15 inches (3.75 mm) horizontally and 0.2 inches (5.0 mm) vertically.

Since it is hard to recognize a word just by hearing it spelled out, we implemented BlindAid so that once a full word has been entered, it pronounces the word when the Speak button is pressed.

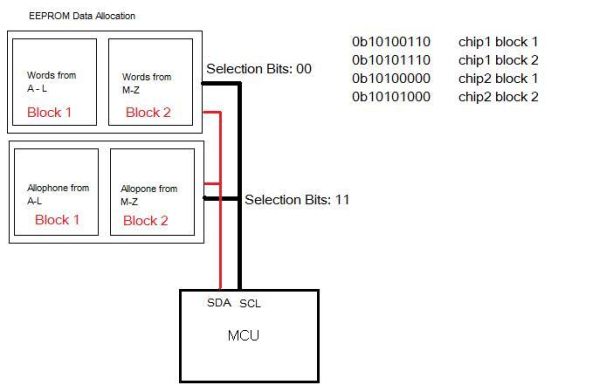

In order to increase the range of words the reader is able to pronounce, we decided to use allophones for speech generation instead of pre-recorded voices.

Hardware Tradeoff

When the memory is expanded to hold a larger dictionary, the time it takes to load the dictionary entries onto the chip increases proportionally. Fortunately, once the data is written to the EEPROM it remains there, so the dictionary only has to be written once.

Software Tradeoff

One of the issues was speed versus coverage. We wanted our Braille reader to handle as many words as possible, but because we use a linear search, the search time grows with the size of the dictionary. We felt coverage was the most important aspect of our project, which was one reason we decided to expand from the 32K flash memory to 2 Mbit of external EEPROM (two 1 Mbit 24AA1025 chips).
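
The cost of the linear search can be seen in a minimal sketch. The entry layout, the names `dict_entry` and `dict_find`, and the sample data are assumptions made for illustration; the code values are placeholders and not real SpeakJet allophone codes, and a real build would read entries from the external EEPROM rather than from an array in RAM.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical dictionary entry: a word and its allophone codes. */
typedef struct {
    const char *word;
    const uint8_t *codes; /* placeholder values, not real allophone codes */
    size_t n_codes;
} dict_entry;

static const uint8_t codes_cat[] = {1, 2, 3};
static const uint8_t codes_dog[] = {4, 5, 6};

static const dict_entry sample_dict[] = {
    {"cat", codes_cat, 3},
    {"dog", codes_dog, 3},
};

/* Linear search: compares the word against every entry in turn, so the
   worst case touches all n entries. Returns NULL when nothing matches. */
const dict_entry *dict_find(const dict_entry *dict, size_t n, const char *word)
{
    for (size_t i = 0; i < n; i++) {
        if (strcmp(dict[i].word, word) == 0) {
            return &dict[i];
        }
    }
    return NULL;
}
```

Doubling the number of entries doubles the worst-case number of comparisons, which is the speed cost that the larger dictionary trades for coverage.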

Relationship of design to IEEE, ISO, ANSI, DIN, and other standards

To the best of our knowledge, our project conforms to all IEEE standards.

Existing patents, copyright, and trademarks

Our project does not violate any existing patents, copyrights, or trademarks. The designs are all our own, and any code or data we used was publicly available for research purposes and is referenced in the appendix. The BlindAid image above was made by us using Photoshop.

Hardware

Main Components

Protoboard: This is the skeleton of the BlindAid. It holds the Mega32 and supports links to the other components.

SpeakJet: This is the messenger between the microcontroller and the user. Whenever a button is pushed or a Braille cell is read, the SpeakJet generates a robotic voice message to keep the user informed about his/her surroundings.

Mega32: The heart of BlindAid. This microcontroller, running at 16 MHz, receives data from the Braille sensor and generates the sound output. It also controls BlindAid parameters such as volume.

Headphone: In-ear design, so sound reaches the user clearly without too much loss to ambient noise.

IR Sensor: The user's guard dog. It triggers a warning message when the user gets too close to a wall in front of him/her.

Braille Sensor: A combination of six NKK buttons that acts as the user's eye. It converts Braille to binary data (B2B) so the Mega32 can process it.

Memory: We are using two 24AA1025 chips. Each is a 1 Mbit EEPROM used to store words and their corresponding allophones.

Design

We picked the SpeakJet because we initially tried to avoid using external memory. Since the SpeakJet contains all the allophones required for pronunciation, we do not have to fill the onboard EEPROM with coefficients for allophone generation. We planned to use the onboard memory to store the data required for speech generation.

The SpeakJet chip can be set up in two ways: Demo/Test Mode or serial control.

In Demo Mode, the chip outputs random phonemes, both biological and robotic. We initially set up the chip in Demo Mode to test it, and afterwards connected it to the serial interface. We found that the indicated lowpass filter was unnecessary, because the signal was clean and the filter only reduced the volume.

Serial data is the main method of communicating with the SpeakJet. The serial configuration is 8 data bits, no parity, and the default factory baud rate is 9600. These are the same settings used to communicate with the STK500 board. Serial data is used to send commands to the MSA unit, which in turn communicates with the 5-channel synthesizer to produce the voices and sounds.
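
For reference, the baud rate divisor for these settings can be worked out with the usual AVR formula for normal asynchronous mode, UBRR = f_osc / (16 × baud) − 1. The helper names below are hypothetical and the sketch only does the arithmetic; it does not touch any USART registers.

```c
#include <stdint.h>

/* Nearest integer to f_cpu / (16 * baud) - 1, the AVR baud rate
   register value in normal asynchronous mode. */
uint16_t ubrr_for_baud(uint32_t f_cpu_hz, uint32_t baud)
{
    return (uint16_t)((f_cpu_hz + 8UL * baud) / (16UL * baud) - 1UL);
}

/* Baud rate actually produced by a given register value (truncated). */
uint32_t actual_baud(uint32_t f_cpu_hz, uint16_t ubrr)
{
    return f_cpu_hz / (16UL * ((uint32_t)ubrr + 1UL));
}
```

For the 16 MHz clock and 9600 baud, `ubrr_for_baud(16000000UL, 9600UL)` gives 103, which corresponds to an actual rate of about 9615 baud, roughly 0.2% above nominal.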

Parts List:

| Part | Quantity | Cost |
| --- | --- | --- |
| NKK pushbuttons | 6 | Sampled |
| Custom protoboard | 1 | $13 |
| Sharp GP2Y0A02 IR sensor | 1 | $12.50 |
| 9V Battery | 2 | $4 |
| LED, capacitors, wires, resistors | | Lab Supply |
| Single ear headphone | 1 | $1 |
| SpeakJet chip | 1 | $15 |
| Large push buttons | 3 | Lab Supply |
| 24AA1025 1 Mbit EEPROM | 2 | Sampled |
| Speaker | | $1.50 |
| Total Cost | | $47.00 |
