Abstract

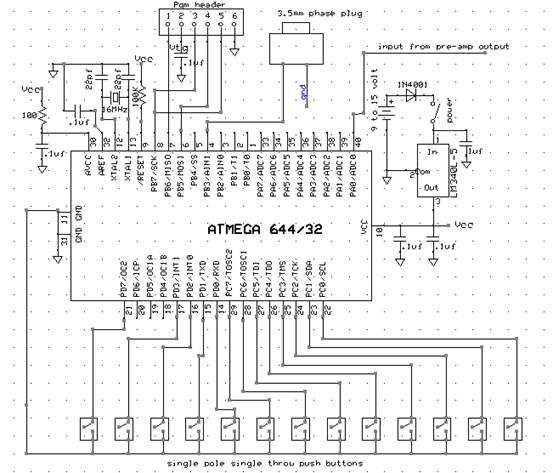

My final project was the design of a digital saxophone that reproduces the sound of an actual saxophone through digitally synthesized waveforms. The digital saxophone consists of a microphone that senses the user blowing into a mouthpiece, push-buttons that select the note to be played, and an Atmel ATmega644 microcontroller that processes the inputs and produces a digital output that sounds like a saxophone.

High Level Design

Overview:

There are several ways to produce sounds digitally, for example Karplus-Strong, FM synthesis, and additive synthesis. The choice of method depends on the type and complexity of the sound to reproduce. Many instruments have carefully designed shapes that give them their distinct tones; however, these intricacies of structure create complicated harmonics and modulations that can be difficult to replicate precisely.

The way to digitally create the sound of an instrument is to reverse engineer it. You can play the instrument, pass the sound through a microphone, scope the electrical signal, and analyze it. Once the data is collected, there are two ways to view the signal: one is time-based and the other is frequency-based. The time-based view reveals qualities of the sound such as the attack of a note, which is the speed and shape with which a sound reaches its peak amplitude. A bell is an example of a sharp attack, whereas a tuba has a dull attack. You can also analyze the decay of a note: a violin has a slow decay, while a drum has a fast one. In the frequency-based view, every instrument has its own characteristic frequency spectrum. Although a pure note has a single frequency (for example, C4 is 262 Hz), an instrument also produces components at other frequencies, which give it its rich sound. To accurately reproduce the sound of the desired instrument, all of those frequencies, as well as their corresponding amplitudes, must be addressed.
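As a rough illustration of the frequency-based analysis, the sketch below estimates the amplitude of each harmonic in a sampled signal by correlating it with a sine and cosine at that harmonic's frequency (a single-bin discrete Fourier transform). The test tone, the sample rate, and the function name `harmonic_amplitudes` are assumptions made for this example, not data or code from the project.

```python
import math

def harmonic_amplitudes(samples, sample_rate, fundamental_hz, n_harmonics):
    """Estimate the amplitude of harmonics 1..n_harmonics of fundamental_hz."""
    n = len(samples)
    amps = []
    for k in range(1, n_harmonics + 1):
        # Angular step per sample for the k-th harmonic.
        w = 2.0 * math.pi * k * fundamental_hz / sample_rate
        re = sum(s * math.cos(w * i) for i, s in enumerate(samples))
        im = sum(s * math.sin(w * i) for i, s in enumerate(samples))
        # Scale so that a sine of amplitude A yields A.
        amps.append(2.0 * math.hypot(re, im) / n)
    return amps

# Hypothetical test tone: a 262 Hz fundamental plus a weaker second harmonic.
SAMPLE_RATE = 8000
F0 = 262.0
N = 8000  # one second, so each harmonic completes a whole number of cycles
tone = [
    1.0 * math.sin(2.0 * math.pi * F0 * i / SAMPLE_RATE)
    + 0.5 * math.sin(2.0 * math.pi * 2.0 * F0 * i / SAMPLE_RATE)
    for i in range(N)
]
amps = harmonic_amplitudes(tone, SAMPLE_RATE, F0, 3)
```

With a real recording the fundamental would first have to be identified, and the analysis window would rarely contain a whole number of cycles, so the estimates would be approximate.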

Synthesis:

Knowing that the digital synthesis of the sound must be approached from both the time-based and the frequency-based side, I started by analyzing the time-based aspect of a saxophone sound. Below are the time-based responses of three different types of instruments (woodwind, bell, drum):

As expected, the bell has a very sharp attack and an exponential-like decay. The envelope I am interested in is the woodwind-like one, since the saxophone is a woodwind instrument. Thus, the amplitude of the overall digital signal I produce has an exponential rise, a steady hold value, and a smooth but quick exponential decay.
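The woodwind-like envelope described here can be sketched as a simple function of time. This is only an illustration: the function name and the two time constants are hypothetical values chosen for the example, not the ones used in the project.

```python
import math

def woodwind_envelope(t, note_off_time, attack_tau=0.02, decay_tau=0.03):
    """Amplitude in [0, 1] at time t (seconds) for a note released at note_off_time.

    attack_tau and decay_tau are hypothetical time constants in seconds.
    """
    if t < 0.0:
        return 0.0
    if t <= note_off_time:
        # Exponential rise that settles at the steady hold value of 1.0.
        return 1.0 - math.exp(-t / attack_tau)
    # Exponential decay starting from whatever level was reached at release.
    level_at_release = 1.0 - math.exp(-note_off_time / attack_tau)
    return level_at_release * math.exp(-(t - note_off_time) / decay_tau)

# Example: envelope values for a note held for half a second.
envelope = [woodwind_envelope(i / 1000.0, 0.5) for i in range(700)]
```

Multiplying each output sample of the synthesized waveform by the envelope value at that instant shapes the attack, hold, and decay of the note.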

Turning to the frequency-based aspect, all of these notes are played at 440 Hz. The other bars represent the harmonics (integer multiples of the frequency of the desired note) above the 440 Hz note. This is a normalized representation: the highest amplitude is equal to one, so you can scale your own amplitudes according to the ratios. For the saxophone, the first and second harmonics are strongest, but the 3rd, 4th and 5th harmonics are also present. As I discovered while testing the sound, these additional harmonics are crucial to the overall saxophone sound despite their smaller amplitudes.
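Summing harmonics with fixed amplitude ratios is the idea behind additive synthesis, and a minimal sketch of it follows. The amplitude values in `HARMONIC_AMPLITUDES` are placeholders invented for this example; the measured saxophone ratios are not reproduced here, and the sample rate is likewise an assumption.

```python
import math

# Hypothetical relative amplitudes for harmonics 1..5 (largest normalized to 1.0).
HARMONIC_AMPLITUDES = [1.0, 0.9, 0.3, 0.2, 0.1]

def additive_sample(t, fundamental_hz, amplitudes=HARMONIC_AMPLITUDES):
    """One output sample at time t (seconds), built from a sum of sine harmonics."""
    total = sum(
        a * math.sin(2.0 * math.pi * (k + 1) * fundamental_hz * t)
        for k, a in enumerate(amplitudes)
    )
    # Divide by the sum of amplitudes so the output never exceeds +/-1.
    return total / sum(amplitudes)

def render(fundamental_hz, duration_s, sample_rate=8000):
    """Render a list of samples for a note of the given pitch and length."""
    n = round(duration_s * sample_rate)
    return [additive_sample(i / sample_rate, fundamental_hz) for i in range(n)]

wave = render(440.0, 0.05)
```

On a microcontroller the same sum would more likely be precomputed into a lookup table and stepped through at a rate set by the note frequency, rather than calling a sine function for every sample.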

Mouthpiece:

The mouthpiece of a saxophone is the source of the sound produced. Below is a dissection of the mouthpiece. On the bottom there is a thin piece of wood called the reed. As the player blows into the mouthpiece and applies pressure to the reed, the reed vibrates in a way that lets the airflow into the mouthpiece resonate in a sine-wave-like fashion.

To simulate this sound, I needed a device that could sense the player blowing, so I used a microphone. The microphone picks up the user's breath, while the microcontroller takes care of the sound-producing algorithm.

Since the microphone is omnidirectional, it picks up noise from every direction around it.
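Because ambient noise reaches the microphone too, the microcontroller needs some rule for deciding when the player is actually blowing. One possible approach, sketched below, compares the short-term signal level with a threshold and uses a lower release threshold so the note does not flicker. Everything here is an assumption for illustration: the 10-bit sample range centered on 512, both threshold values, and the names `mean_abs_level` and `BlowDetector`.

```python
def mean_abs_level(adc_samples, midpoint=512):
    """Average distance of the samples from the assumed mid-scale bias point."""
    return sum(abs(s - midpoint) for s in adc_samples) / len(adc_samples)

class BlowDetector:
    """Threshold detector with hysteresis (threshold values are hypothetical)."""

    def __init__(self, on_threshold=60, off_threshold=30):
        self.on_threshold = on_threshold
        self.off_threshold = off_threshold
        self.blowing = False

    def update(self, adc_samples):
        """Feed one block of ADC samples and return the current blowing state."""
        level = mean_abs_level(adc_samples)
        if self.blowing:
            # Stay on until the level drops clearly below the release threshold.
            if level < self.off_threshold:
                self.blowing = False
        elif level > self.on_threshold:
            self.blowing = True
        return self.blowing

detector = BlowDetector()
quiet = [512, 515, 509, 514, 510]   # background noise near mid-scale
loud = [712, 312, 700, 330]         # strong signal while blowing
```

In practice the thresholds would have to be tuned against the noise level actually measured at the microphone.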

Because the microphone's output is in the range of millivolts, the signal must be amplified before it is fed into the microcontroller's ADC. To achieve this, I built the pre-amp circuit shown below (the above image also shows the pre-amp connected to the microphone, which is at the back tip of the cone).

The resistor above the microphone sets the required bias current. I chose a 3.1 kΩ bias resistor for a 4.1 V operating voltage, well below the 10 V maximum. A large AC-coupling capacitor (1 µF) follows the microphone stage to isolate its bias point from the pre-amp's. The pre-amp's bias is set by a divider of two 5.1 kΩ resistors, which places it at half of Vdd, or 2.5 V. The amplifier, an LM358, is used in a non-inverting configuration with a gain of approximately R2/R1 (1 + R2/R1, to be exact). I chose R2 to be 1 MΩ and R1 to be 10 kΩ for a gain of about 100. This way the small output signal of the microphone is amplified enough for the microcontroller's ADC to read.
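The bias and gain figures quoted for the pre-amp can be checked with a few lines of arithmetic. The sketch below uses the textbook formulas for a resistor divider and an ideal non-inverting amplifier; the function names are made up for this example, and the clipping estimate assumes an ideal rail-to-rail output, which a real LM358 does not provide.

```python
def divider_voltage(vdd, r_top, r_bottom):
    """Output voltage of a two-resistor divider across vdd."""
    return vdd * r_bottom / (r_top + r_bottom)

def non_inverting_gain(r_feedback, r_ground):
    """Ideal non-inverting op-amp gain, 1 + R2/R1."""
    return 1.0 + r_feedback / r_ground

def max_input_amplitude(vdd, bias_v, gain):
    """Largest input swing before an ideal rail-to-rail output would clip."""
    return min(bias_v, vdd - bias_v) / gain

VDD = 5.0                                         # supply implied by the 2.5 V half-rail bias
bias_v = divider_voltage(VDD, 5.1e3, 5.1e3)       # two equal 5.1 kOhm resistors
gain = non_inverting_gain(1e6, 10e3)              # R2 = 1 MOhm, R1 = 10 kOhm
max_in_v = max_input_amplitude(VDD, bias_v, gain)
```

The ideal formula gives a gain of 101, close to the quoted 100, and under these idealized assumptions the microphone signal could swing roughly 25 mV around its bias before the amplified output leaves the 0 to 5 V range.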

Parts List:

| Name | Supplier | Unit cost | Quantity | Total cost |
|---|---|---|---|---|
| Microcontroller | ECE 4760 lab (Atmel 644) | $6.00 | 1 | $6.00 |
| Microphone | ECE 4760 lab | $2.62 | 1 | $2.62 |
| Pushbutton | DigiKey (SW820-ND) | $1.81 | 13 | $23.53 |
| Op-amp | ECE 4760 lab (LM358) | $0.45 | 1 | $0.45 |
| Foam board | Cornell Store | $11.99 | 1 | $11.99 |
| Total | | | | $44.59 |

For more detail: Digital Saxophone Using Atmega644