- Contents

- Introduction

- High Level Design

- Program/Hardware Design

- Results of the Design

- Conclusions

- Appendix A: Commented Code

- Appendix B: Schematics

- Appendix C: Cost Details

- Appendix D: Tasks

- Appendix E: Gestures

- References

- Introduction

Our project uses a microphone placed in a stethoscope to recognize gestures made by dragging a fingernail over a surface.

We used the unique acoustic signatures of different gestures on an existing passive surface such as a computer desk or a wall. Our microphone listens to the sound of scratching that is transmitted through the surface material. Our gesture recognition program works by analyzing the number of peaks and the width of these peaks for the various gestures which require your finger to move, accelerate and decelerate in a unique way. We also created a PC interface program to execute different commands on a computer based on what gesture is observed.

We chose this project because we wanted to create a touch-based interface that can easily be used with any computer running Windows. A list of recognized gestures is included in Appendix E.

- High Level Design

Rationale/Sources

The rationale behind our idea comes from the need for a simple and inexpensive acoustic touch interface. Although touch-based interfaces, and in turn gesture-based commands, have seen a meteoric rise in popularity, they remain prohibitively expensive for large surfaces. Even when the cost is reasonable, installing such interfaces on existing hardware may be impractical. Furthermore, many simple gesture-based commands do not need the fine resolution that traditional touch-based interfaces provide. The general theory behind our project can be used in many different applications. Our project is based on a paper by Harrison and Hudson (“Scratch Input”).

Background Theory

Scratching across a textured surface generates a high-frequency sound in the 3 kHz range, which sets it apart from most sources of ambient noise. Many simple gestures have unique acoustic signatures because the finger must accelerate and decelerate in a particular fashion. For example, a straight line consists of a single acceleration followed by a single deceleration. Normally, the faster a gesture is executed, the higher the amplitude and frequency of the sound. Gesture recognition can be accomplished by placing a microphone against the textured surface and comparing the received acoustic signal with saved acoustic signatures. The intensity of the gesture can be deduced from the amplitude and frequency of the signal.
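The idea of counting peaks and measuring their widths can be sketched offline in a few lines. This is only an illustration, not the code that runs on our microcontroller: it assumes the input is an amplitude envelope (a rectified, smoothed version of the microphone signal), and the names `find_peaks`, `classify`, the 50% relative threshold, and the template names are all hypothetical. Using a threshold relative to the loudest sample is one simple way to make the detection independent of overall amplitude.

```python
from typing import Dict, List, Optional, Tuple


def find_peaks(envelope: List[float], rel_threshold: float = 0.5) -> List[Tuple[int, int]]:
    """Return (start_index, width) for each run of samples at or above
    rel_threshold * max(envelope). The relative threshold is an assumption."""
    if not envelope:
        return []
    loudest = max(envelope)
    if loudest <= 0:
        return []  # silence: nothing to detect
    threshold = rel_threshold * loudest

    peaks: List[Tuple[int, int]] = []
    start: Optional[int] = None
    for i, value in enumerate(envelope):
        if value >= threshold and start is None:
            start = i  # a peak begins
        elif value < threshold and start is not None:
            peaks.append((start, i - start))  # the peak ends
            start = None
    if start is not None:  # peak still open at the end of the buffer
        peaks.append((start, len(envelope) - start))
    return peaks


def classify(envelope: List[float], templates: Dict[str, List[int]]) -> Optional[str]:
    """Pick the template with the same number of peaks and the smallest
    total difference in peak widths; return None if no template matches."""
    widths = [width for _, width in find_peaks(envelope)]
    best_name: Optional[str] = None
    best_cost: Optional[int] = None
    for name, ref_widths in templates.items():
        if len(ref_widths) != len(widths):
            continue  # peak count must match exactly
        cost = sum(abs(a - b) for a, b in zip(widths, ref_widths))
        if best_cost is None or cost < best_cost:
            best_name, best_cost = name, cost
    return best_name
```

With hypothetical templates such as `{"one_stroke": [6], "two_strokes": [3, 3]}`, an envelope containing two short bursts would be matched to `two_strokes`, and scaling the whole envelope by a constant does not change the result.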

Logical Structure

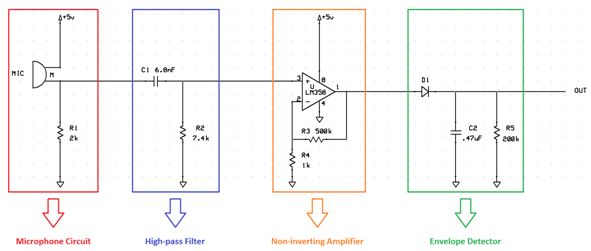

Figure 1 shows a high-level block diagram of all our project’s components. The microphone is used to get input from vibration on a surface. The analog circuit filters and amplifies this input and sends it to the MCU where gesture recognition takes place. Finally, the PC interface software performs specific actions based on the output of the MCU.
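The last stage of the block diagram, where the PC maps a recognized gesture to an action, can be sketched as a small dispatch table. This is a hedged illustration rather than our actual PC interface program: the function name `make_dispatcher`, the single-character gesture codes, and the example actions are hypothetical, and the serial-port reading itself is left out.

```python
from typing import Callable, Dict


def make_dispatcher(actions: Dict[str, Callable[[], None]]) -> Callable[[str], bool]:
    """Build a function that runs the action registered for a gesture code.

    The returned function reports True if an action ran and False if the
    code was unknown, so unrecognized input from the MCU is ignored safely.
    """
    def dispatch(code: str) -> bool:
        action = actions.get(code)
        if action is None:
            return False  # unknown gesture code: do nothing
        action()
        return True

    return dispatch
```

A caller would register one callable per gesture code, for example `make_dispatcher({"L": next_slide, "C": open_browser})`, and feed it each code received from the MCU.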

Hardware/Software Tradeoffs

Amplitude-independent peak detection was initially supposed to be implemented in analog hardware. A hardware peak detector has the advantage of being faster and consuming no memory. Hardware would have been fast enough to support our original idea of using time-of-arrival measurements between two microphones to locate the signal’s originating position.

However, all the peak detection circuits we found were designed for steady-state AC signals, not transient ones. Therefore, we moved peak detection to software. With peak detection in software, we did not have enough memory to sample at a rate fast enough for the speed of sound in wood while still retaining the past 96 ms of data. Thus, we were forced to abandon our dual-microphone idea.
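A rough back-of-the-envelope calculation shows the scale of the memory problem. The figures below are assumptions for illustration, not measurements from our project: a speed of sound in wood of about 3500 m/s (it varies widely with species and grain direction), a desired position resolution of 1 cm, and one byte per sample. The 96 ms history comes from the text above, and the 4 KB SRAM size is taken from the ATmega644 datasheet. Integer units (mm, ms) are used so the arithmetic is exact.

```python
# Assumed values for illustration only (see the paragraph above).
SPEED_OF_SOUND_WOOD_MM_PER_S = 3_500_000  # about 3500 m/s, assumed
POSITION_RESOLUTION_MM = 10               # 1 cm, assumed
HISTORY_MS = 96                           # from the text
BYTES_PER_SAMPLE = 1                      # assumed 8-bit samples
SRAM_BYTES = 4096                         # ATmega644 on-chip SRAM


def required_sample_rate_hz(speed_mm_per_s: int, resolution_mm: int) -> int:
    """Samples per second needed so that sound travels at most one
    resolution step between consecutive samples."""
    return speed_mm_per_s // resolution_mm


def buffer_bytes(rate_hz: int, history_ms: int, bytes_per_sample: int = 1) -> int:
    """Bytes needed to hold history_ms of samples at rate_hz."""
    return rate_hz * history_ms * bytes_per_sample // 1000


rate = required_sample_rate_hz(SPEED_OF_SOUND_WOOD_MM_PER_S, POSITION_RESOLUTION_MM)
needed = buffer_bytes(rate, HISTORY_MS, BYTES_PER_SAMPLE)
print(f"sample rate: {rate} Hz, buffer: {needed} bytes, SRAM: {SRAM_BYTES} bytes")
```

Under these assumptions the buffer alone would need 33,600 bytes, roughly eight times the microcontroller's entire SRAM, which is consistent with why the time-of-arrival approach could not coexist with software peak detection.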

Standards

Our design complies with the RS-232 standard for serial communication (published by the EIA/TIA as TIA/EIA-232). Character format and transmission bit rate are controlled by an integrated circuit called a UART, which converts data from parallel to asynchronous start-stop serial form. Voltage levels, slew rate, and short-circuit behavior are typically controlled by a line driver that converts the UART’s logic levels to RS-232-compatible signal levels, and a receiver that converts RS-232-compatible signal levels back to the UART’s logic levels.
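To make "asynchronous start-stop serial form" concrete, the sketch below frames a single byte the way a UART would at the logic level. It assumes the common 8N1 character format (one start bit, eight data bits sent least-significant bit first, no parity, one stop bit); the text above does not state which format our design uses, and the function names are hypothetical.

```python
from typing import List


def frame_8n1(byte: int) -> List[int]:
    """Return the 10 logic-level bits for one byte: start bit (0),
    eight data bits LSB first, then stop bit (1)."""
    if not 0 <= byte <= 0xFF:
        raise ValueError("byte must be in the range 0..255")
    data_bits = [(byte >> i) & 1 for i in range(8)]  # LSB first
    return [0] + data_bits + [1]


def unframe_8n1(bits: List[int]) -> int:
    """Recover the byte from a 10-bit frame, checking start and stop bits."""
    if len(bits) != 10 or bits[0] != 0 or bits[9] != 1:
        raise ValueError("framing error")
    return sum(bit << i for i, bit in enumerate(bits[1:9]))
```

For example, the character `'A'` (0x41) is framed as `[0, 1, 0, 0, 0, 0, 0, 1, 0, 1]`. The line driver then translates these logic levels into RS-232 voltages.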

Patents

After conducting a brief patent search, we were not able to find any existing patents covering techniques and applications similar to those used in our project. However, we did find some patents related to speech recognition, which is similar to the acoustic gesture recognition that our project relies on. One such patent is the “Low cost speech recognition system and method” (Patent number 4910784), which uses differences between the received speech and “reference templates” to recognize whether a certain word has been said. The patent describes a design built from a “feature extractor,” a comparator, and a decision controller, which is quite similar to the overall design of our project. However, this patent has recently expired and is now in the public domain.

- Program/Hardware Design

Hardware Design

Our hardware consisted of a fairly small circuit on a solder board, a microcontroller circuit, and a microphone inside a stethoscope. We packaged all of this into a small aluminum box that we made from sheet metal, with holes cut out for connectors and switches. The microphone is attached to standoffs which “push” the stethoscope against the surface on which the box is placed. Figure 2 below shows all our hardware and an image of our complete packaged project.

Parts List:

| Part Description | Quantity | Cost |
| --- | --- | --- |
| Electret Microphone | 1 | $1.00 |
| Stethoscope | 1 | $8.00 |
| ATmega644 Microcontroller | 1 | Sampled |
| Max233 CPP | 1 | Sampled |
| Solder Board | 1 | $1.00 |
| Analog Circuit Components | N/A | Free |
| RS232 Connector for Custom PCB | 1 | $1.00 |
| LM358 | 1 | $0.50 |
| Header sockets | 2 | $3.00 |
| Power Supply | 1 | Salvaged |
| Aluminum for Box | N/A | Salvaged |
| Assorted Hardware (screws, standoffs, etc.) | N/A | Salvaged |
| Total | | $14.50 |
