Introduction

The SearchBot is a fully functional model car that can either be controlled wirelessly from a PC or autonomously search for red balls scattered on a flat surface.

Autonomous vehicles are just now being realized in labs around the world and will soon have major military and commercial potential. The Adaptive Communications and Signals Processing (ACSP) Group of Cornell University is studying the control of autonomous vehicles in sensor network systems and has asked us to contribute a robot vehicle to its research. The end result is the SearchBot, a car that can either be controlled by a user or autonomously search for red balls. In Controlled Mode, a user enters an angle to rotate and a distance to move on a PC terminal, and the vehicle, which receives the request wirelessly, moves accordingly. In Autonomous Mode, the robot uses a search algorithm to locate red balls on the floor. Upon finding one, the SearchBot pushes it back to a central base and continues searching for others. This project uses a commercial robot chassis, a PDA for wireless communication and an optical camera for vision. The SearchBot is an exciting application of many different electrical engineering disciplines and illustrates the practicality of using microcontrollers to build autonomous robots.

High Level Design

Rationale:

The SearchBot originated as a request from Professor Lang Tong for his sensor networks research. He needed a vehicle that could be controlled by a Matlab script on a PC to move around in a sensor field. We were intrigued by this idea but wanted the vehicle to also perform some task autonomously, because that would be more challenging and more widely applicable. Thus, we created the SearchBot, a vehicle that seeks out red balls on the floor and moves them to a designated spot called the base. The vehicle can also be driven from the PC in a separate mode, so it remains relevant to Professor Tong’s research. The SearchBot was an appealing project because it combined a variety of ECE topics into one coherent and practical application. In planning and implementing the project we needed to research computer vision, control systems, embedded C++ and wireless communication, all of which were new to us before this class.

Logical Structure:

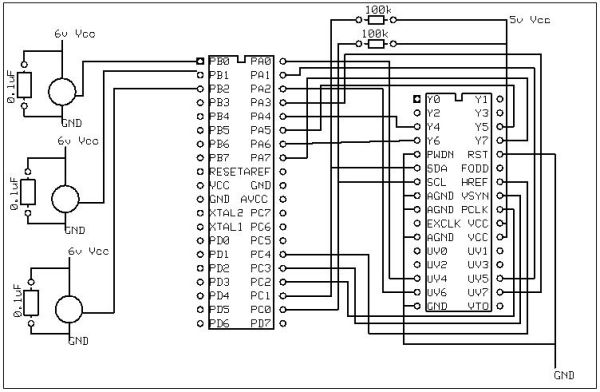

The SearchBot is a complex system with many inputs and outputs connected to a Mega32 microcontroller, which serves as the central control unit. To simplify planning and keep construction efficient, we divided the project into subsystems that communicate solely with the MCU. This greatly benefited us during both design and testing because it allowed us to focus on each component individually without adding unnecessary complexity. We identified the three main components of the SearchBot as the PC/PDA/MCU communication link, the optical camera sensor, and the servos that drive the vehicle. Figure 1 highlights how information and control propagate through the system.

To run the SearchBot, the user turns on the PC, PDA and microcontroller. An ActiveSync connection must be made and maintained between the PC and PDA. The user issues commands through a Matlab script, and these are relayed through the PDA to the MCU over an RS-232 serial link. The Mega32 processes the commands and the car responds accordingly.

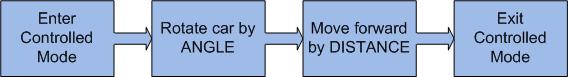

Controlled Mode: This mode allows a user to run a Matlab script to control the movement of the robot. An angle and a distance are sent to the MCU as inputs, and the car moves accordingly, based on its calibration. Note that it is preferable to adjust the car in Calibration Mode before using it for precise movement. The high-level proposal for Controlled Mode is as follows:

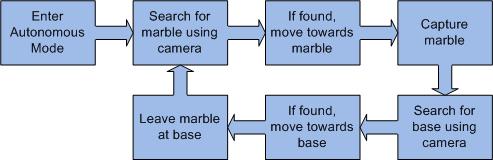
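
As a rough illustration of the Controlled Mode data flow, the sketch below frames an angle and distance as a single text line on the PC side and parses it back on the receiving side. The report does not specify the wire format, so the `M <angle> <distance>` line layout, the `MoveCommand` name, and the choice of degrees and feet as units are all assumptions made for this example rather than the project's actual protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MoveCommand:
    angle_deg: float    # rotation requested by the user (degrees, assumed unit)
    distance_ft: float  # forward travel requested by the user (feet, assumed unit)


def encode_command(cmd: MoveCommand) -> bytes:
    """Serialize a command as one ASCII line (hypothetical wire format)."""
    return f"M {cmd.angle_deg:.1f} {cmd.distance_ft:.2f}\n".encode("ascii")


def decode_command(line: bytes) -> MoveCommand:
    """Parse one received line back into a command, rejecting malformed input."""
    fields = line.decode("ascii").split()
    if len(fields) != 3 or fields[0] != "M":
        raise ValueError(f"malformed command: {line!r}")
    return MoveCommand(angle_deg=float(fields[1]), distance_ft=float(fields[2]))
```

A line-oriented ASCII format is used here only because it is easy to inspect on a serial terminal; a fixed-width binary frame would serve equally well.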

Autonomous Mode: This mode allows the car to move freely on its own and sense its surroundings using an optical camera. When the user initiates Autonomous Mode, the car immediately turns 300° while scanning for red. For this reason, the area must be completely free of other red objects to avoid false triggers. If the target color is not found, the car moves forward one foot and performs another vision sweep. This continues until the SearchBot locates a red object. The vehicle then locks onto the object and moves towards it, reading the camera and making dynamic adjustments to its motion. Upon reaching the target, the car captures it using a catch bin attached to the front of the chassis. The robot then searches for the base, which is identified by the color green. We made the base large so that it is easily discovered. The SearchBot pushes the ball to the base and then backs up to release it. The process is then repeated until the user enters a new instruction. Autonomous Mode is illustrated by the following graphic:
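
The sequence of steps in Autonomous Mode can also be read as a small state machine. The sketch below is one possible encoding of that sequence, not the firmware itself: the state names and the three boolean flags (`sees_red`, `sees_green`, `at_target`) are hypothetical, each flag is taken to be sampled when a state's action finishes, and returning to a sweep when red is lost during the approach is an assumption the report does not state.

```python
from enum import Enum, auto


class State(Enum):
    SWEEP = auto()      # rotate 300 degrees while scanning for red
    ADVANCE = auto()    # sweep found nothing: move forward one foot
    APPROACH = auto()   # drive toward the red object, correcting heading
    FIND_BASE = auto()  # ball captured: scan for the green base
    DELIVER = auto()    # push the ball to the base
    RELEASE = auto()    # back away from the base to release the ball


def next_state(state, sees_red=False, sees_green=False, at_target=False):
    """Return the state that follows `state` given the current sensor flags."""
    if state is State.SWEEP:
        return State.APPROACH if sees_red else State.ADVANCE
    if state is State.ADVANCE:
        return State.SWEEP
    if state is State.APPROACH:
        if at_target:
            return State.FIND_BASE
        # Assumption: losing sight of red restarts the sweep.
        return State.APPROACH if sees_red else State.SWEEP
    if state is State.FIND_BASE:
        return State.DELIVER if sees_green else State.FIND_BASE
    if state is State.DELIVER:
        return State.RELEASE if at_target else State.DELIVER
    return State.SWEEP  # after RELEASE, search for the next ball
```

Keeping the transition logic in a pure function like this makes it possible to check the mode sequence on a PC without the car or camera attached.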

Calibration Mode: To convert a distance or angle input into a time for which to turn the wheels, scaling variables are needed. We found that these variables change with battery voltage, floor surface friction and the weight on the car, so it is useful to have a way to adjust them. A separate mode on the car is used to set these calibration variables and save them to EEPROM.
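
To make the role of the calibration variables concrete, here is a minimal sketch of converting a requested move into wheel run times. It assumes a simple linear model with one scale factor per motion type; the names `Calibration`, `seconds_per_degree` and `seconds_per_foot` are hypothetical, and the report does not say whether the real conversion is linear.

```python
from dataclasses import dataclass


@dataclass
class Calibration:
    seconds_per_degree: float  # hypothetical scale factor for rotation
    seconds_per_foot: float    # hypothetical scale factor for straight travel


def drive_times(cal, angle_deg, distance_ft):
    """Return (turn_seconds, forward_seconds) for a requested move.

    Only durations are computed here; the sign of each input would select
    the direction of rotation or travel elsewhere.
    """
    turn_s = abs(angle_deg) * cal.seconds_per_degree
    forward_s = abs(distance_ft) * cal.seconds_per_foot
    return turn_s, forward_s
```

Under this model, recalibrating for a weaker battery or a rougher floor means changing only the two scale factors, which is consistent with storing them in EEPROM.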

Software/Hardware Tradeoffs:

This project depends heavily on both software and hardware but few tradeoffs exist. The SearchBot is heavily hardware-driven because both the car itself and its optical sensor are hardware components. Car control and image extraction, meanwhile, were inevitably software-oriented. In hindsight, the only tradeoff we made was in the way that we implemented wireless control on the SearchBot. Rather than building a complex RF communication system, we reasoned it was practical to utilize a PDA’s WLAN capabilities and just program the communication protocols necessary between the individual components. In the end, this saved us a lot of time and was a logical (but expensive) decision.

Standards:

This project utilizes many components that interact with each other and conforms to the standards set for the different communication protocols. The PDA uses the RS-232 serial standard to interact with the Mega32. The camera connects to the microcontroller using the I2C standard. Additionally, we used the 802.11b Wi-Fi standard to transfer information between the PC and PDA. A final standard used was RGB color encoding in the camera.

Patents and Legal Considerations:

The SearchBot is an academic not-for-profit endeavor and does not infringe on any copyrights or patents. We referenced some open-source code and referred to other academic projects that used the same optical camera, but these sources are cited and credited in this report. We bought or sampled all of our hardware components and are using them for their intended purposes. All design and construction work on the project was our own.

Program/Hardware Design

The SearchBot includes many subsystems that must be developed individually and then integrated. The communications portion of the project involves transferring information wirelessly from the PC to the MCU. We implemented this by using a PDA as the middleman, so separate communication links were written for the PC/PDA and PDA/MCU connections. Moreover, the car needs a robust state machine in order to move around, search for balls and obey commands from the user. Finally, there is the challenging aspect of reading from the optical sensor and completing the fast image processing necessary to maneuver the car in Autonomous Mode.
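
As an illustration of the kind of lightweight image processing this implies, the sketch below classifies RGB pixels as red and finds where red sits horizontally in one image row, which could then be used to steer toward the ball. It is not the project's algorithm: the function names, the assumption of 8-bit channels, and the `min_level` and `margin` thresholds are all hypothetical and would need tuning for a real camera and lighting.

```python
def is_red(r, g, b, min_level=120, margin=40):
    """True when red is bright and clearly dominates green and blue.

    Assumes 8-bit channel values (0-255); thresholds are illustrative only.
    """
    return r >= min_level and r - g >= margin and r - b >= margin


def red_centroid_column(row):
    """Mean column index of the red pixels in one row of (r, g, b) tuples.

    Returns None when the row contains no red pixels.
    """
    cols = [x for x, (r, g, b) in enumerate(row) if is_red(r, g, b)]
    if not cols:
        return None
    return sum(cols) / len(cols)
```

Comparing the returned column with the center of the row gives a signed steering error; the same approach with the channels swapped would locate the green base.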

Parts List:

| Part | Cost | Supplied by |
| --- | --- | --- |
| Acroname PPRK robot vehicle | $325.00 | ACSP group |
| Dell Axim x51 PDA | ~$500.00 | ACSP group |
| PDA RS232 Serial Connection | ~$50.00 | ACSP group |
| OV6620 optical camera | $43.65 | ACSP group |
| Batteries (9V and 4 AA) | $0.00 | ACSP group |
| Mega32 microcontroller | $8.00 | ECE476 lab |
| Custom PC board | $5.00 | ECE476 lab |
| Solder board | $2.50 | ECE476 lab |
| Max233CPP | $7.00 | ECE476 lab |
| RS232 Connection | $8.00 | ECE476 lab |
| Wire, capacitors and resistors | $0.00 | ECE476 lab |
| Aluminum | $0.00 | Scrap |
| Red Balls | $0.00 | Scrap |
| Green Poster Board | $0.00 | Scrap |
