Introduction:

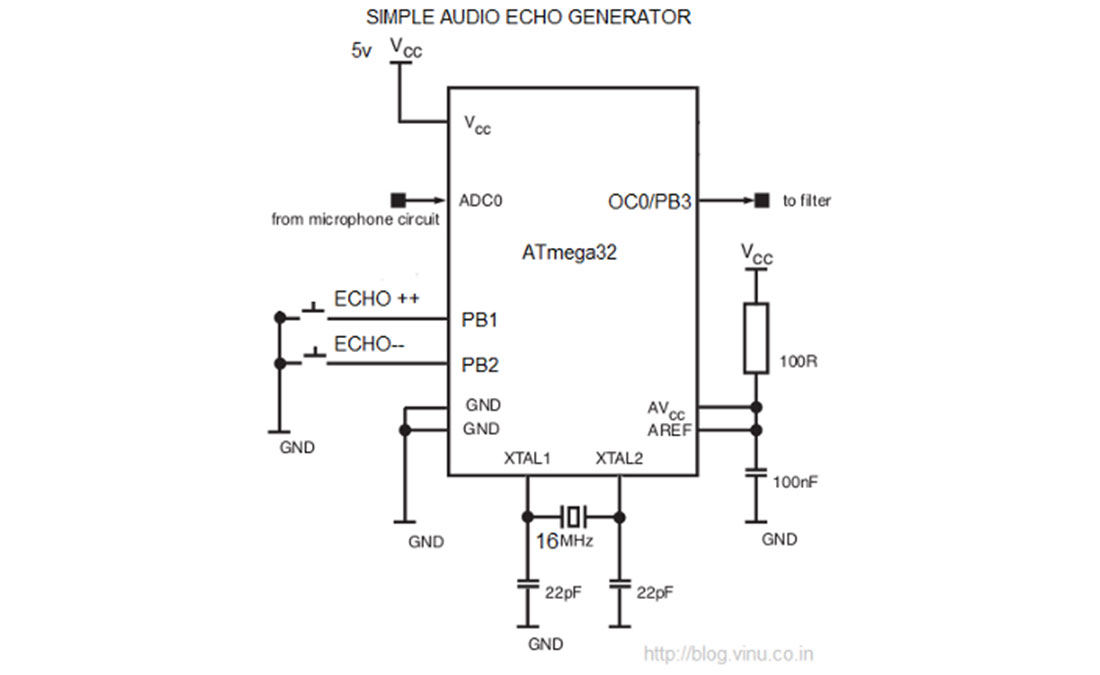

But now I can do this very easily with some simple digital signal processing on a microcontroller. The concept is simple: apply a properly delayed feedback to the digital samples held in a circular buffer. I did this using an ATmega32 microcontroller and it worked fine. It is a simple but genuinely interesting project. An echo is just the start: if the MCU has a reasonably large RAM, small DSP experiments like this one open the door to a lot of other fun effects.
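To make "delayed feedback in a circular buffer" concrete, here is a minimal sketch in C. It is not the original ATmega32 code. It assumes signed 8-bit samples (one byte per slot, matching the 1900-byte buffer described below) and a fixed feedback gain of 1/2. The names `echo_t`, `echo_init` and `echo_process` are hypothetical. Each output sample is the input plus half of the output from 1900 samples earlier, so every sound comes back repeatedly with decaying volume.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Sketch only: 8-bit signed samples and a feedback gain of 1/2 are assumptions. */
#define ECHO_BUF_LEN 1900u /* one byte per sample, as in the 1900-byte buffer */

typedef struct {
    int8_t buf[ECHO_BUF_LEN]; /* ring of past output samples */
    uint16_t pos;             /* slot holding the output from ECHO_BUF_LEN samples ago */
} echo_t;

void echo_init(echo_t *e) {
    memset(e->buf, 0, sizeof e->buf); /* start with silence */
    e->pos = 0;
}

/* Clamp a wider intermediate value back into the int8_t range. */
static int8_t clamp8(int16_t v) {
    if (v > 127) return 127;
    if (v < -128) return -128;
    return (int8_t)v;
}

/* Feedback echo: y[n] = x[n] + y[n - ECHO_BUF_LEN] / 2 */
int8_t echo_process(echo_t *e, int8_t x) {
    assert(e->pos < ECHO_BUF_LEN);          /* ring index invariant */
    int16_t delayed = e->buf[e->pos];       /* oldest stored output */
    int8_t y = clamp8((int16_t)(x + delayed / 2));
    e->buf[e->pos] = y;                     /* feed the output back for the next echo */
    e->pos = (uint16_t)((e->pos + 1u) % ECHO_BUF_LEN);
    return y;
}
```

On the microcontroller, `echo_process` would be called once per ADC sample (for example from the ADC conversion-complete interrupt) and its result sent to whatever output stage drives the speaker. Because the gain is below 1, each repeat is quieter than the last and the echo dies away instead of building up.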

The ADC module inside the AVR converts the analog signal to a digital signal at a particular sampling rate (which we can choose). Next, a 1900-byte circular buffer is introduced. Our first aim is to create a delay between the input and output audio. To do this, we can fill the buffer from one point and read it from another point, so the output lags behind the input. So how do we make this delay as large as possible? It is easier to explain with a diagram.

For More Detail: Generating AUDIO ECHO using Atmega32 microcontroller
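The write/read arrangement can be sketched as a plain delay line. In this hypothetical C sketch (`delay_line_t`, `delay_init`, `delay_step` and `delay_ms` are illustrative names, not from the original project), the read index trails the write index by `delay` slots, and each sample period reads before it writes. The largest delay comes from putting the read index on the very slot that is about to be written: every sample is then read just before it is overwritten, exactly 1900 samples after it was stored. The delay time is the delay in samples divided by the sampling rate. The unsigned 8-bit samples with 128 as silence, and the 8 kHz rate in the note below, are assumptions, since the text leaves the rate open.

```c
#include <assert.h>
#include <stdint.h>

#define BUF_LEN 1900u /* 1900-byte buffer, one unsigned 8-bit sample per slot */
#define SILENCE 128u  /* assumed mid-scale value of an 8-bit unipolar ADC reading */

typedef struct {
    uint8_t buf[BUF_LEN];
    uint16_t wr; /* write index: slot for the newest ADC sample */
    uint16_t rd; /* read index: trails wr by `delay` slots */
} delay_line_t;

/* delay must be 1..BUF_LEN; delay == BUF_LEN puts rd on wr (maximum delay). */
void delay_init(delay_line_t *d, uint16_t delay) {
    assert(delay >= 1 && delay <= BUF_LEN);
    for (uint16_t i = 0; i < BUF_LEN; i++) d->buf[i] = SILENCE;
    d->wr = 0;
    d->rd = (uint16_t)((BUF_LEN - delay) % BUF_LEN);
}

/* One sample period: read the delayed sample first, then store the new one. */
uint8_t delay_step(delay_line_t *d, uint8_t in) {
    uint8_t out = d->buf[d->rd];
    d->buf[d->wr] = in;
    d->rd = (uint16_t)((d->rd + 1u) % BUF_LEN);
    d->wr = (uint16_t)((d->wr + 1u) % BUF_LEN);
    return out;
}

/* Delay time = delay in samples / sampling rate, returned in milliseconds. */
double delay_ms(uint16_t delay_samples, double sample_rate_hz) {
    return 1000.0 * delay_samples / sample_rate_hz;
}
```

At an assumed 8 kHz sampling rate, `delay_ms(1900, 8000.0)` gives 237.5 ms. Lowering the sampling rate stretches the delay for the same 1900 bytes, at the cost of audio bandwidth (only frequencies below half the sampling rate survive).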
